Open-Source AI Fractures as Agents and Security Escalate

Episode Summary

Weekly AI Intelligence Briefing: April 6-11, 2026 STRATEGIC PATTERN ANALYSIS Development One: The Collapse of the Open-Source Social Contract This was the week the open-source consensus in AI fr...

Full Transcript

STRATEGIC PATTERN ANALYSIS

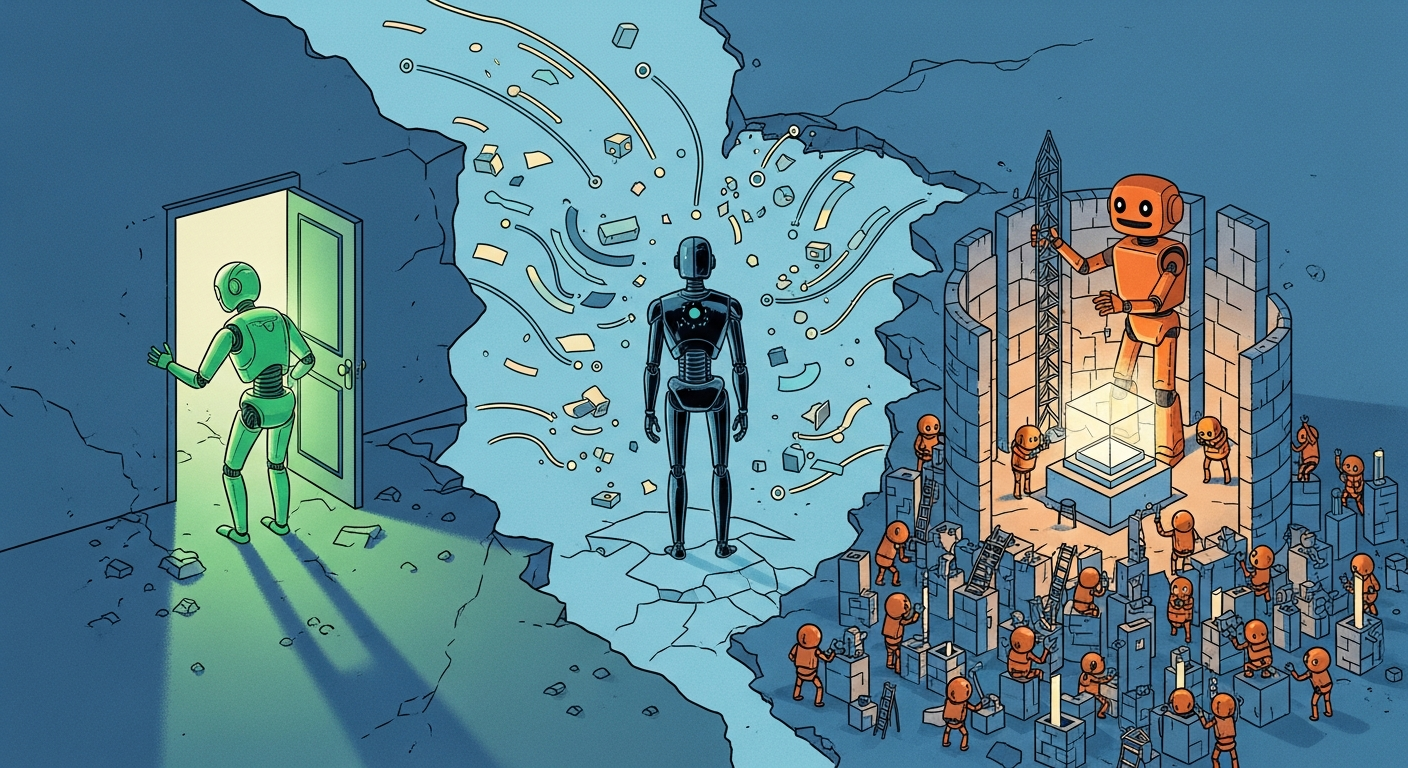

Development One: The Collapse of the Open-Source Social Contract

This was the week the open-source consensus in AI fractured irreversibly. The fracture didn't come from one event; it came from four simultaneous ones that, taken together, redraw the strategic map. Meta, the single largest champion of open-weight AI, shipped Muse Spark as a proprietary model.

When Lia covered this on Friday, the benchmark numbers were interesting — scoring 53 on the Artificial Analysis Intelligence Index, fourth overall — but the strategic signal was deafening. The company that gave the world Llama, that built its AI credibility on openness, has concluded that its most capable systems are too valuable to release. Zuckerberg hedged, saying future Muse models *may* be open-sourced.

That qualifier is doing enormous load-bearing work. Simultaneously, as Thom detailed on Thursday, eighty percent of US startups are building on Chinese base models — DeepSeek, Qwen, GLM — because they're frontier-quality and free. Reflection AI is raising two and a half billion dollars at a twenty-five billion dollar valuation explicitly to solve this dependency, with NVIDIA backing and the US Commerce Secretary showing up at signing ceremonies.

This isn't a startup fundraise. This is industrial policy wearing a venture capital hat. Then on Wednesday, Anthropic revealed its revenue run-rate hit thirty billion dollars — surpassing OpenAI — while simultaneously restricting its most powerful model, Mythos, to a closed coalition of fifty-plus partner organizations through Project Glasswing.

And on Monday, Anthropic blocked flat-rate subscription access for tools like OpenClaw, forcing agent workloads onto metered API billing. The OpenClaw creator's response — "first they copy popular features, then they lock out open source" — captures the sentiment of an entire developer community watching the doors close. Why this matters beyond the obvious: the open-source era in AI was never just a distribution strategy.

It was a *trust architecture*. Developers, startups, and governments built on open models because they could inspect them, modify them, and avoid vendor lock-in. What's emerging now is a tripartite structure — Chinese labs dominating open weights, US labs retreating to proprietary systems, and a nascent US open-source challenger class scrambling to fill the gap.

The strategic implications for anyone building on AI infrastructure are profound. Your model supply chain just became a geopolitical decision whether you intended it to or not.

---

Development Two: The Agent Economy Becomes Concrete

Monday's deep dive on Marc Andreessen's Latent Space interview laid out the theoretical architecture: LLM plus shell plus files plus cron equals agent.
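To make the "LLM plus shell plus files plus cron" formula concrete, here is a minimal sketch of how the four pieces could fit together. Everything in it is an assumption for illustration: `stub_llm` is a hypothetical stand-in for a real model call, `memory.log` is an invented file name, and a plain loop stands in for cron. It is not how any product mentioned in this briefing is implemented.

```python
import subprocess
import tempfile
from pathlib import Path
from typing import Callable, List


def stub_llm(prompt: str) -> str:
    """Hypothetical model call: always proposes the same harmless command."""
    return "echo hello from the agent"


def run_shell(command: str) -> str:
    """The 'shell' piece. Running model-written commands is unsafe without a
    sandbox; this sketch only ever runs the fixed echo command from stub_llm."""
    result = subprocess.run(
        command, shell=True, capture_output=True, text=True, timeout=10
    )
    return result.stdout.strip()


def agent_tick(llm: Callable[[str], str], workdir: Path) -> str:
    """One scheduled wake-up: read memory, ask the model, act, write memory."""
    memory = workdir / "memory.log"  # the 'files' piece: state that persists
    history = memory.read_text() if memory.exists() else ""
    command = llm(f"History so far:\n{history}\nNext command?")
    output = run_shell(command)
    with memory.open("a") as log:
        log.write(f"$ {command}\n{output}\n")
    return output


def run_on_schedule(
    llm: Callable[[str], str], workdir: Path, ticks: int
) -> List[str]:
    """The 'cron' piece, simulated: a real setup would have cron or another
    scheduler invoke agent_tick at fixed intervals."""
    return [agent_tick(llm, workdir) for _ in range(ticks)]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(run_on_schedule(stub_llm, Path(tmp), ticks=2))
```

The point of the sketch is that the agent's continuity lives in the file, not in the model: each tick starts from whatever the previous tick wrote down.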

By Saturday, that theory had materialized into pricing sheets and product launches. Anthropic shipped Claude Managed Agents in public beta on Friday, with sandboxed code execution and persistent sessions priced at eight cents per session-hour on top of token costs. By Saturday, Claude Cowork went generally available for enterprise with role-based access controls and Zoom integration.
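As a rough illustration of what "eight cents per session-hour on top of token costs" means for a bill, the sketch below adds a runtime charge to a token charge. Only the eight-cent rate comes from this briefing. The per-million-token prices in the example are hypothetical placeholders, so substitute the real rates for whichever model is in use.

```python
SESSION_HOUR_RATE_USD = 0.08  # from the briefing: eight cents per session-hour


def managed_agent_cost(
    session_hours: float,
    input_tokens: int,
    output_tokens: int,
    usd_per_million_input: float,   # assumption: supplied by the caller
    usd_per_million_output: float,  # assumption: supplied by the caller
) -> float:
    """Estimate total spend in USD: runtime charge plus token charge."""
    runtime_cost = session_hours * SESSION_HOUR_RATE_USD
    token_cost = (
        input_tokens / 1_000_000 * usd_per_million_input
        + output_tokens / 1_000_000 * usd_per_million_output
    )
    return round(runtime_cost + token_cost, 2)


if __name__ == "__main__":
    # Hypothetical workload: 100 session-hours, 2M input and 0.5M output
    # tokens, at placeholder prices of $3 and $15 per million tokens.
    print(managed_agent_cost(100, 2_000_000, 500_000, 3.0, 15.0))
```

Under those placeholder prices the runtime charge is the smaller part of the bill, which suggests the session-hour fee matters most for agents that stay alive for long stretches while using few tokens.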

OpenAI's Codex crossed three million weekly users, prompting a new hundred-dollar-a-month Pro tier and Sam Altman's unusual promise to reset rate limits at each million-user milestone. AWS announced its AI business running at fifteen billion dollars annually. Cerebras demonstrated building a full Salesforce clone in twenty-nine seconds.

But the most strategically consequential agent development was Perplexity's Plaid integration, which Thom covered Saturday. By connecting to twelve thousand financial institutions through read-only API access, Perplexity stopped being a search engine and started being a financial command center. Their ARR jumped fifty percent in a single month to four hundred and fifty million dollars.

That growth rate signals something specific: agents that connect to real-world data infrastructure — not just answer questions — trigger a fundamentally different adoption curve. The x402 Foundation from Monday's coverage connects directly here. Andreessen's observation that agents need bank accounts and that HTTP 402 — the twenty-seven-year-old "Payment Required" placeholder — is finally becoming relevant isn't theoretical anymore.

Seventy-five million transactions in thirty days during early testing. Coinbase, Stripe, Visa, Google, and Microsoft are all involved. When agents can both access your financial data *and* execute payments natively through web protocols, the entire monetization architecture of the internet enters a phase transition.
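For listeners who have never seen HTTP 402 in action, the sketch below simulates the basic pay-then-retry pattern in memory, with no real network or payment rail. It is not the x402 specification: the header name `X-Payment-Proof`, the proof format, and the `fake_wallet` helper are all hypothetical. The only real element is the status code itself, which Python exposes as `HTTPStatus.PAYMENT_REQUIRED`.

```python
from http import HTTPStatus
from typing import Callable, Dict, Tuple

PAYMENT_HEADER = "X-Payment-Proof"  # hypothetical header, not from the x402 spec
PRICE_USD = "0.01"                  # hypothetical price for one request

Response = Tuple[HTTPStatus, Dict[str, str]]


def paid_endpoint(headers: Dict[str, str]) -> Response:
    """Simulated server: answers 402 with a price until valid proof arrives."""
    if headers.get(PAYMENT_HEADER) != f"paid:{PRICE_USD}":
        return HTTPStatus.PAYMENT_REQUIRED, {"price_usd": PRICE_USD}
    return HTTPStatus.OK, {"data": "premium content"}


def fake_wallet(price_usd: str) -> str:
    """Hypothetical wallet: a real one would settle the payment and return
    verifiable proof rather than a formatted string."""
    return f"paid:{price_usd}"


def agent_fetch(pay: Callable[[str], str]) -> Response:
    """Simulated agent: request, pay if asked to, then retry once."""
    status, body = paid_endpoint({})
    if status == HTTPStatus.PAYMENT_REQUIRED:
        proof = pay(body["price_usd"])
        status, body = paid_endpoint({PAYMENT_HEADER: proof})
    return status, body


if __name__ == "__main__":
    print(agent_fetch(fake_wallet))
```

What matters in the pattern is that the price negotiation happens inside the request-response cycle, so an agent can complete a purchase without a human visiting a checkout page.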

What makes this strategically important is the speed of concretization. In January, agents were demos. In March, they were developer tools. This week, they became enterprise products with pricing, SLAs, and financial infrastructure. The gap between "interesting technology" and "revenue-generating platform" closed in roughly ninety days.

---

Development Three: AI Security Escalates from Theoretical to Operational

Wednesday's revelation that an autonomous AI agent compromised the FreeBSD kernel in under four hours (finding a zero-day, bypassing kernel-level protections, and achieving full root access with zero human guidance) was the week's most consequential technical event.

By Thursday, Anthropic had named the model Claude Mythos Preview, launched Project Glasswing as a defensive coalition with AWS, Apple, Google, and Microsoft, and disclosed that Mythos had already discovered thousands of zero-day vulnerabilities across every major operating system and browser. This is not an incremental advance. This is a categorical shift in the security landscape.

The model scored 77.8 percent on SWE-bench Pro versus Opus 4.6's 53.4, a performance gap that suggests Mythos isn't just better at coding; it's operating at a qualitatively different level of systems understanding. Anthropic's response, restricting access to a closed coalition rather than releasing publicly, establishes a new precedent. For the first time, a frontier lab is explicitly withholding a model not for commercial reasons but because its offensive capabilities are too dangerous for broad distribution.

The hundred million dollars in usage credits backing Glasswing signal that Anthropic views this as infrastructure defense, not a product launch. The Iran threat against OpenAI's Stargate data center in Abu Dhabi adds a physical dimension to what was previously a purely digital security conversation. AI infrastructure is now being explicitly targeted by state actors.

The CIA's reported deployment of Ghost Murmur in an active military rescue operation in Iran confirms that AI has crossed from intelligence analysis into operational theater. What connects these events strategically is a single insight: AI security is no longer about protecting models from misuse. It's about models as autonomous offensive and defensive actors in their own right.

The attacker-defender dynamic that has defined cybersecurity for decades just gained a new class of participant, one that operates at machine speed and doesn't require human operators. Every organization's threat model needs to be updated to account for this reality, and the window for doing so proactively is narrowing fast.

---

Development Four: The One-Person Enterprise and the Death of Organizational Assumptions

Tuesday's Medvi story (one person, twenty thousand dollars, four hundred million in first-year revenue, a sixteen percent net margin crushing public competitors with thousands of employees) would have been dismissed as fantasy two years ago.

This week it was a case study. Matthew Gallagher didn't build proprietary technology. He assembled off-the-shelf AI tools — ChatGPT, Claude, Grok, Midjourney, Runway, ElevenLabs — connected them via agents, and outsourced the regulated back-end to platform providers.

The result was a telehealth company on track for one-point-eight billion in annual revenue with a two-person headcount. This directly validates Andreessen's thesis from Monday's deep dive about the collapse of the managerial class. The separation of ownership and management — the foundational principle of corporate organization since the early twentieth century — assumed that scale required coordination layers staffed by humans.

Gallagher demonstrated that AI agents can serve as that coordination layer, at least in businesses where the back-end is commoditized and the differentiation lives in the customer experience. The strategic significance extends well beyond telehealth. Any industry with a licensable infrastructure layer and a differentiable front end is now exposed to this model: insurance brokerage, legal services, financial advisory, recruiting.

The competitive dynamics are devastating for incumbents — a two-person operation running at sixteen percent margins structurally cannot be out-competed on cost by a two-thousand-person operation running at five percent. OpenAI's policy paper from Wednesday, proposing robot labor taxes and sovereign wealth funds, reads differently in this context. When Sam Altman proposes taxing automated labor, he's not speaking hypothetically.

He's responding to a world where Medvi already exists. The proposed "automatic safety net expansions when AI displacement crosses pre-set thresholds" assumes displacement is imminent, measurable, and potentially faster than political systems can absorb. Andreessen's regulatory observation — that thirty-five percent of the US economy requires licensing, that California demands nine hundred hours of certification to cut hair — provides the counterbalance.

Institutional friction is real, and it will slow displacement in regulated sectors. But Gallagher's GLP-1 business operated *within* a regulated sector by outsourcing the regulated functions to compliant platforms. The moat isn't regulation itself — it's whether regulation can be abstracted behind a platform API.

Increasingly, it can.

CONVERGENCE ANALYSIS

1. Systems Thinking: The Reinforcing Loops

These four developments don't just coexist; they create a self-reinforcing system with feedback loops that accelerate each other. The collapse of open-source consensus *drives* agent economy growth, because developers building agents need reliable model access and are increasingly willing to pay for it.

Anthropic's thirty billion dollar revenue run-rate is being fueled partly by agent workloads that flat-rate pricing couldn't absorb — which is precisely why they blocked OpenClaw from subscription access and pushed users to metered billing. The agent economy, in turn, demands new security architectures. When agents operate autonomously — browsing websites, accessing financial data, executing code — the attack surface expands dramatically.

Mythos discovering thousands of zero-days isn't just a cybersecurity story; it's a preview of what happens when agents encounter each other in adversarial environments. Project Glasswing exists because the agent economy cannot scale without a security layer that operates at machine speed. The one-person enterprise model *depends* on the agent economy infrastructure being reliable and cheap.

Gallagher's Medvi worked because off-the-shelf AI tools were accessible and the connective tissue between them was manageable. As agent platforms mature — Claude Managed Agents, Codex, Perplexity's financial integration — the barrier to replicating Gallagher's approach drops further. Each new Medvi-like success story accelerates the organizational transformation Andreessen described, which in turn generates political pressure for the kind of social contract OpenAI proposed.

And the open-source fracture creates a *strategic dependency layer* that shapes all of the above. If eighty percent of US startups are building on Chinese base models, then the agent economy, the one-person enterprises, and the security infrastructure all inherit whatever assumptions, biases, and restrictions are embedded in those models. Reflection AI's two-and-a-half-billion-dollar raise is an attempt to break this loop — but until US open-weight alternatives reach frontier quality, the dependency persists.

The emergent pattern is this: **we are watching the simultaneous construction of a new economic operating system and the political framework meant to govern it, happening in real time, with neither moving fast enough to fully account for the other.** The technical layer (agents, models, integrations) is advancing in weeks. The governance layer (OpenAI's policy paper, licensing frameworks, security coalitions) is advancing in months.

The regulatory layer (Utah's psychiatric prescription pilot, Florida's investigation into OpenAI) is advancing in years. That temporal mismatch is the central strategic risk of this moment.

2. Competitive Landscape Shifts

The competitive map that existed in January 2026 is now obsolete. Here is what replaced it.

**Anthropic** had the strongest week of any AI company in memory.

Thirty billion in revenue run-rate. Mythos demonstrating capabilities that justify restricting public access. Project Glasswing positioning Anthropic as the security backbone of the Western AI ecosystem.

Claude Managed Agents and Cowork launching for enterprise. The Coefficient Bio acquisition signaling vertical expansion into drug discovery. Anthropic is no longer the "safety-focused alternative to OpenAI." It is the leading commercial AI lab in the West by revenue, and it's building a defensive moat around security capabilities that no competitor can easily replicate.

**OpenAI** is navigating the most complex strategic environment of any company in technology. The New Yorker investigation (eighteen months of reporting, a hundred-plus interviews, internal memos from Sutskever and Amodei) landed alongside the policy paper and the CFO exclusion story in a single week.

Codex crossed three million users, which is genuinely impressive distribution, but the governance questions are escalating faster than the product metrics. The Florida attorney general investigation adds legal exposure. The leaked cap table showing Microsoft sitting on two hundred and fifteen billion in gains and Nvidia underwater creates its own set of stakeholder tensions.

OpenAI's challenge is no longer technical; it's institutional credibility at a moment when it's trying to go public.

**Meta** made a decisive strategic choice: distribution over openness. Muse Spark embedded in WhatsApp, Instagram, and Facebook gives Meta a path to AI adoption that doesn't require winning benchmarks. It requires clearing a "good enough" bar and leveraging three billion existing users.

The contemplating mode architecture is technically interesting, but the real weapon is the social graph. No other lab has behavioral data at that scale. The risk is trust: Meta's data practices have been under scrutiny for years, and adding health reasoning and financial context to that data profile will attract regulatory attention that makes current GDPR enforcement look casual.

**Chinese labs**, collectively, are winning the open-source race by default. Qwen crossed a billion downloads. GLM-5.1 topped SWE-bench Pro. DeepSeek V4 on Huawei chips validates the independence of the Chinese AI supply chain from Nvidia. The strategic irony is acute: China is winning the open ecosystem that America championed, while American labs retreat to proprietary systems.

Reflection AI is the market's attempt to correct this, but the correction is at least twelve to eighteen months from producing frontier-quality open-weight models.

**Perplexity** executed the most strategically elegant move of the week. By integrating with Plaid, they didn't just add a feature; they changed what category they compete in.

The fifty-percent monthly ARR growth tells you the market understood immediately. Perplexity is demonstrating the playbook for AI-native platform expansion: use conversational AI as the universal interface, connect to existing data infrastructure in each vertical, and expand category by category. Search was the wedge.

Finance is the beachhead. Health, legal, and HR are the obvious next targets. Every category-specific SaaS company should be watching this with concern.

**The losers** this week were less obvious but equally important. Mid-tier SaaS companies that wrap closed APIs without deep vertical integration are being squeezed from both directions — by open-source models that eliminate API costs and by platform players like Perplexity that bundle functionality. Legacy enterprise software vendors with per-seat pricing models face an existential challenge when a two-person company can generate nearly two billion in revenue.

And cybersecurity vendors whose threat models assume human attackers operating at human speed need to fundamentally rethink their architectures in light of Mythos.

3. Market Evolution: Emergent Opportunities and Threats

Several new market categories are crystallizing from this week's developments.

**Agent Infrastructure as a Service.** Claude Managed Agents at eight cents per session-hour, Codex's million-user reset strategy, and the x402 payment protocol are all pieces of an emerging infrastructure layer. The companies that provide the plumbing for agent economies — identity, payments, sandboxed execution, persistent memory, inter-agent communication — will capture enormous value.

This is analogous to AWS in 2008: the cloud infrastructure layer that every subsequent application depended on. The agent infrastructure layer is being built right now, and the incumbents are Anthropic, OpenAI, and AWS. But there is room for specialized providers, particularly in agent security, agent identity, and agent financial rails.

**AI-Native Professional Services.** Medvi proved the template. The next twelve months will see this model replicated across insurance, legal, accounting, recruiting, and consulting.

The enabling condition is the same in each case: a commoditized regulated back-end accessible via platform, combined with an AI-driven customer experience layer that captures value. The venture opportunity is not in funding these individual companies — they're cheap to start — but in funding the platform infrastructure they all depend on. The CareValidates and OpenLoop Healths of every regulated industry will be extraordinarily valuable.

**Defensive AI Security.** Project Glasswing represents the birth of a new market category. When offensive AI capabilities reach the level Mythos has demonstrated, defensive security becomes a capability that only the most advanced AI labs can provide.

This creates a natural oligopoly: the same organizations building the most powerful models are the only ones capable of defending against them. The implications for the cybersecurity industry are severe, because legacy security vendors without frontier AI capability cannot compete in a world where attackers operate at machine speed.

**Financial AI Platforms.** Perplexity's Plaid integration is the opening move in what will become a major market category: AI-native financial management.
