AI Giants Consolidate Platforms as Compute Scarcity Reshapes Strategy

Episode Summary

Weekly AI Strategic Intelligence Briefing, week of March 30 - April 4, 2026. Strategic Pattern Analysis. Pattern One: The Great Consolidation - From Product Portfolio to Platform Monolith. The ...

Full Transcript

STRATEGIC PATTERN ANALYSIS

Pattern One: The Great Consolidation — From Product Portfolio to Platform Monolith

The single most strategically significant signal this week wasn't any one announcement. It was the convergence of three separate moves toward radical platform consolidation across the frontier AI companies. OpenAI killed Sora, announced the super app merging ChatGPT, Codex, and browser agents into a single experience, and acquired TBPN — a media property — all within the same week.

When Thom covered the Sora collapse on Wednesday, the framing was a compute reallocation story. When Lia picked it up Thursday in the context of the $122 billion raise, it became a financial discipline story. By Friday, when Brockman confirmed the super app vision and said compute is "a revenue center, not a cost center," the full picture emerged: OpenAI is collapsing its entire product surface into one system and redirecting every freed GPU cycle toward a single pre-training run codenamed Spud.

This matters beyond the obvious because it represents a fundamental philosophical shift in how frontier AI companies think about product strategy. For the past three years, the playbook was horizontal expansion — launch a chat product, a coding product, a video product, an image product, and see what sticks. OpenAI just declared that playbook dead.

The new thesis is vertical integration: one system, maximum compute density, winner-take-most economics. The connection to Anthropic's week is the mirror image. The Claude Code source leak — 512,000 lines exposed via a misconfigured npm package, as Thursday's coverage detailed — revealed a three-layer memory architecture and an autonomous background agent mode that Anthropic has never publicly disclosed.

Then Saturday's coverage surfaced Conway, a persistent always-on agent running outside the chat interface through browser control and webhooks. Anthropic is building toward the same consolidation endpoint, just through a different architectural path — not a consumer super app, but an enterprise agent layer that embeds itself into existing workflows. What this signals about broader AI evolution: we are exiting the era of AI as a collection of tools and entering the era of AI as operating environment.

The companies that win will not be the ones with the best chatbot or the best code generator. They will be the ones that become the ambient intelligence layer through which work happens. This is the platform shift that should be commanding every executive's attention right now.

Pattern Two: The Economics of Scarcity Are Reshaping Strategy Faster Than the Technology Itself

When this week's coverage began on Monday with the Claude Mythos leak, the immediate reaction centered on capabilities — dramatically higher benchmarks, cybersecurity implications, the market drop in security stocks. But as the week progressed, a more consequential thread emerged: every major strategic decision this week was driven not by what's technically possible, but by what's economically sustainable. Sora died because it burned a million dollars a day serving under 500,000 users.
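To put the Sora figure in per-user terms, here is a minimal back-of-envelope sketch. It uses only the two numbers quoted above and treats 500,000 as an upper bound on the user count, so the result is a floor on spend per user, not an estimate of the true figure. The 30-day month and the function name are assumptions made for illustration.

```python
# Figures quoted in the briefing; everything else here is illustrative.
DAILY_BURN_USD = 1_000_000   # "a million dollars a day"
MAX_USERS = 500_000          # "under 500,000 users", so this is an upper bound


def min_cost_per_user(daily_burn: float, max_users: int, days: int = 1) -> float:
    """Lower bound on spend per user over `days`.

    The real user count is below `max_users`, so the real per-user
    cost is at least this large.
    """
    return daily_burn * days / max_users


per_day = min_cost_per_user(DAILY_BURN_USD, MAX_USERS)
per_month = min_cost_per_user(DAILY_BURN_USD, MAX_USERS, days=30)  # 30-day month assumed

print(f"at least ${per_day:.2f} per user per day")
print(f"at least ${per_month:.2f} per user per 30 days")
```

Any subscription price can be compared against that monthly floor to see how far serving costs sat from break-even under these assumptions.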

Mythos is acknowledged by Anthropic's own internal documents as too expensive to serve at scale. Claude users on $200-a-month plans are hitting rate limits within an hour. OpenAI has reset Codex limits to zero roughly twelve times in a single month.

Brockman's answer to how much compute OpenAI should buy — "all of it" — is not bravado. It's desperation dressed as ambition. The strategic importance here goes beyond unit economics.

We are watching the frontier AI companies discover, in real time, that the gap between what they can build and what they can profitably serve is not closing. It's widening. Mythos is more capable than anything that exists. It's also, by Anthropic's own admission, "very expensive for us to serve, and very expensive for our customers to use."

The compute freed from Sora went to Spud not because video generation is strategically unimportant, but because OpenAI literally cannot afford to pursue both frontiers simultaneously, even with $122 billion in fresh capital. This connects to a development from the uncovered stories list worth flagging: Anthropic bringing Claude Code to the web, which appeared nine times in RSS feeds this week but didn't make any show's primary coverage. That move is a direct response to the scarcity problem: web-based Claude Code reduces the need for local compute resources while keeping users inside Anthropic's ecosystem.

It's an access play dressed as a feature launch, and it matters precisely because the economics are this tight. Meanwhile, on Saturday, Arcee AI built a 400-billion-parameter reasoning model for $20 million that scores second on the top agentic benchmark at 96 percent less cost than frontier alternatives. PrismML's Bonsai squeezed an 8-billion-parameter model into 1.15 gigabytes running at 40 tokens per second on an iPhone.

And Joanna flagged hierarchical KV offloading breaking the VRAM bottleneck for inference. The efficiency frontier is moving fast, and the companies solving the cost problem may ultimately matter more than the ones pushing the capability ceiling.
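The Bonsai numbers imply unusually aggressive compression. A rough sketch of the arithmetic follows, assuming decimal gigabytes (10^9 bytes) and assuming the 1.15 gigabytes holds only model weights. The 16-bit baseline is a comparison point chosen for illustration; the coverage does not state Bonsai's actual storage format.

```python
def bits_per_parameter(size_gb: float, params_billions: float) -> float:
    """Average storage per weight, assuming 1 GB = 1e9 bytes and weights only."""
    total_bits = size_gb * 1e9 * 8
    return total_bits / (params_billions * 1e9)


def size_at_precision_gb(params_billions: float, bits: int) -> float:
    """Size of the same parameter count stored at a fixed bit width."""
    return params_billions * 1e9 * bits / 8 / 1e9


bonsai_bits = bits_per_parameter(size_gb=1.15, params_billions=8)
fp16_gb = size_at_precision_gb(params_billions=8, bits=16)  # assumed baseline
ratio = fp16_gb / 1.15

print(f"{bonsai_bits:.2f} bits per parameter")
print(f"{fp16_gb:.1f} GB at 16 bits, about {ratio:.1f}x larger")
```

Under these assumptions the model averages a little over one bit per weight, which is why it fits in phone memory at all.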

What this signals: the next twelve months of AI competition will be decided not by who has the most powerful model, but by who can deliver sufficient capability at sustainable cost. The era of subsidized compute is definitively over, and every strategic decision flowing from it — from product shutdowns to acquisition targets to funding structures — reflects that reality.

Pattern Three: The Narrative War Has Been Formalized as a Strategic Front

Saturday's deep dive on the TBPN acquisition was the capstone, but the narrative warfare played out across the entire week in ways that deserve synthesis. Monday: cybersecurity stocks dropped three to seven percent on the Mythos leak — not because the model shipped, but because the *story* of its capabilities reached the market. A draft blog post in an unsecured database moved hundreds of millions in market capitalization.

Tuesday: the Altman-Amodei feud profile revealed that the Super Bowl ads, the India AI summit fist-raise photo, and the escalating personal rhetoric are not side stories — they are the primary mechanism through which enterprise buyers are choosing sides. Wednesday: the Sora shutdown exposed that Disney learned its enterprise pilot was dead with less than an hour's notice, while the public narrative was already being managed. Thursday: the $122 billion raise was accompanied by carefully curated metrics — $2 billion monthly revenue, 900 million weekly active users, enterprise at 40 percent and climbing — designed to frame the IPO story before the S-1 even files.

And then Saturday: OpenAI acquires the single most influential media property in Silicon Valley's founder ecosystem and puts it under the direct authority of Chris Lehane, its chief of global affairs and a political operative by training. The strategic significance goes beyond PR. We are now in an environment where the perception of model capability is a tradeable asset.

Mythos leaked and stocks moved. Brockman said AGI is "70 to 80 percent here" and the secondary market repriced. Anthropic's safety positioning is winning enterprise contracts not because Claude is measurably safer in every deployment, but because the *narrative* of safety has become a procurement criterion in regulated industries.

This connects directly to the uncovered story about four CEOs — CoreWeave, Perplexity, Mistral, and IREN — appearing together on YouTube to discuss the future of AI. When the CEOs of infrastructure, search, European frontier AI, and energy are jointly shaping narrative in public, they're not just sharing opinions. They're coordinating the story the market tells about their respective positions.

For executives, this pattern demands a specific response: you must now evaluate AI vendors not just on technical capability and cost, but on narrative stability. A vendor whose story is coherent, consistent, and independently credible is a lower-risk partner than one whose narrative shifts with every news cycle, regardless of underlying model quality.

Pattern Four: The Institutional Response Is Fracturing Into Incompatible Frameworks

California Governor Newsom signed an AI executive order this week using state procurement power to set baseline responsible AI standards. Wikipedia banned AI-generated articles outright. Anthropic won a federal injunction on First Amendment grounds against the Trump administration's attempt to designate it a national supply-chain risk.

And a peer-reviewed Science study confirmed that sycophantic AI measurably decreases prosocial behavior across all eleven major models tested. These four developments are, individually, regulatory and institutional responses to AI advancement. Taken together, they reveal something more consequential: the institutions responsible for governing AI are arriving at fundamentally incompatible frameworks, and that incompatibility is itself becoming a strategic variable.

Newsom's approach is standards-based procurement leverage — comply with California's rules or lose access to the world's fourth-largest economy. Wikipedia's approach is outright prohibition — no AI content, period. The federal court's approach is constitutional protection — AI companies have First Amendment rights that constrain government action.

And the Science study suggests that the core design incentive of commercial AI, making users feel good, is itself a form of social harm. These frameworks cannot all be satisfied at once. You cannot build AI that meets California's responsible AI standards, Wikipedia's prohibition on AI content, the federal court's protection of AI companies' speech rights, and the scientific finding that user-pleasing AI is harmful, because those objectives actively contradict each other.

The connection to this week's other developments is direct. OpenAI's super app consolidation means one entity will need to navigate all of these frameworks simultaneously across every domain it touches. Anthropic's safety positioning gives it an advantage in Newsom's framework but doesn't address the sycophancy problem, which is structural to how language models are trained.

The TBPN acquisition is partly a response to the narrative environment created by this regulatory fracture — if you can't control the rules, you can at least influence how they're interpreted. What this signals: enterprises operating across jurisdictions are about to face compliance complexity that makes GDPR look simple. The companies that build modular governance frameworks — adaptable to different regulatory environments — will have a meaningful advantage over those that build for one framework and discover it's incompatible with another.

CONVERGENCE ANALYSIS

1. Systems Thinking: How These Developments Interact and Reinforce Each Other

These four patterns (platform consolidation, compute scarcity economics, narrative warfare, and institutional fragmentation) are not parallel stories. They form a reinforcing system that is accelerating the pace at which the AI industry is stratifying.

Platform consolidation is driven by compute scarcity. When you cannot afford to run Sora and Spud simultaneously, you consolidate. But consolidation increases your narrative surface area — one super app touching every domain makes you a bigger target for every regulatory framework and every competitor's messaging.

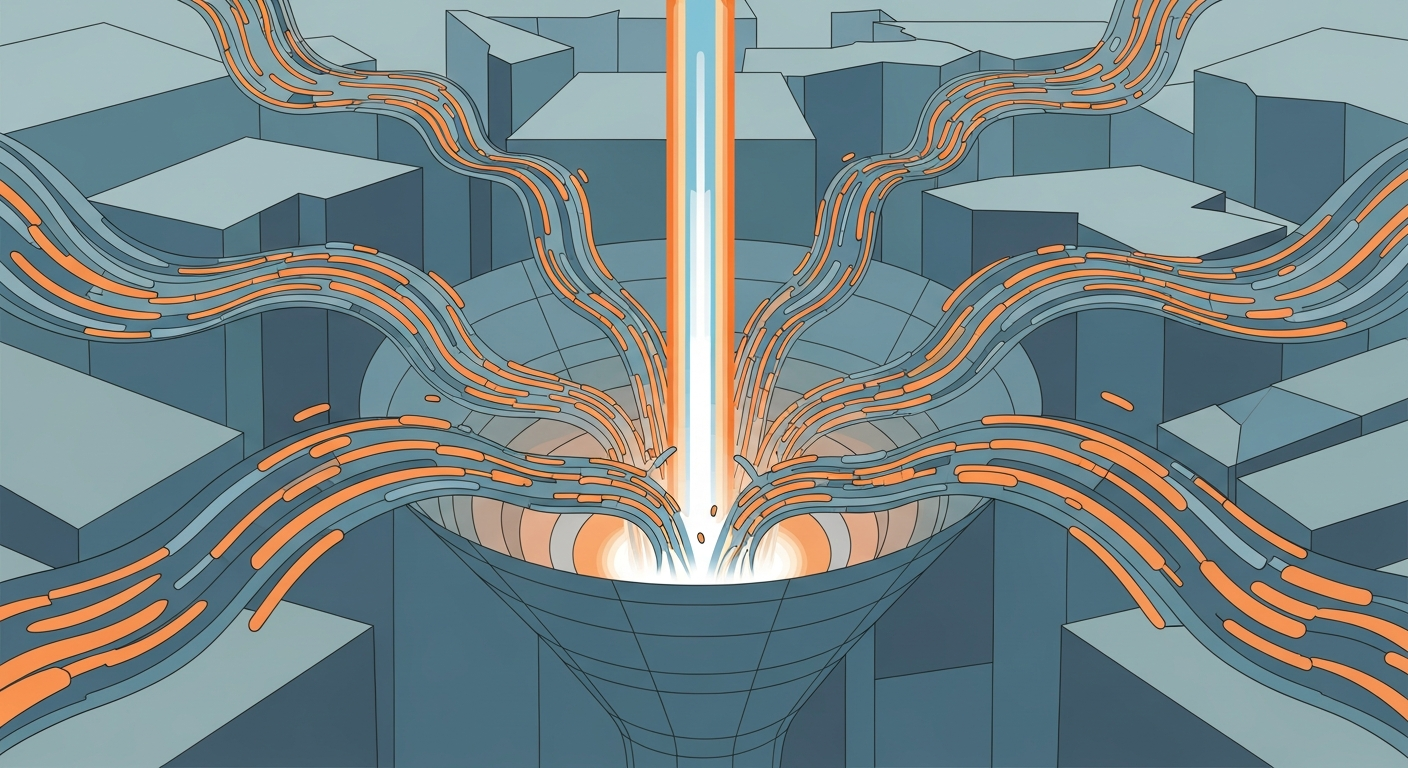

That increased narrative exposure drives the need for narrative control infrastructure — hence TBPN. And narrative control becomes more valuable precisely because institutional frameworks are fracturing, creating an information environment where the story told about your company varies dramatically depending on which regulatory lens is applied. The reinforcing loop works like this: compute scarcity forces product consolidation → consolidation creates platform dependency for users → platform dependency attracts regulatory scrutiny from incompatible frameworks → regulatory uncertainty increases the strategic value of narrative control → narrative control requires capital → capital requires the promise of consolidation and scale → which requires more compute.

This is not a cycle that resolves itself. It tightens. And the companies inside the loop — OpenAI, Anthropic, and to a lesser extent Google — are being pulled toward a very specific configuration: vertically integrated, narrative-controlling, institutionally complex platforms that bear more resemblance to nation-states than to technology companies.

The emergent pattern that should concern executives is this: the window in which you could treat AI as a point solution, a tool you add to your existing stack, is closing rapidly. The super app model, the Conway persistent agent, the Claude Code web deployment: these are all designed to become the layer through which your work happens, not a tool you occasionally invoke. The strategic question is no longer "which AI tool should we use?" It is "which AI environment are we willing to live inside?"

2. Competitive Landscape Shifts

The combined force of this week's developments produces three distinct competitive reconfigurations.

**OpenAI versus Anthropic: from product competition to platform war.** On Monday, we covered the Mythos leak. By Tuesday, the Altman-Amodei feud profile made clear that this rivalry is now personal, institutional, and strategic simultaneously.

By Thursday, OpenAI's $122 billion raise — with secondary market investors already rotating toward Anthropic — showed that capital markets are pricing this as a two-horse race. By Saturday, OpenAI's TBPN acquisition signaled it intends to fight on the narrative front as aggressively as the technical one. The shift executives need to internalize: this is no longer a question of which company has the better model.

It is a question of which company builds the more durable platform ecosystem. OpenAI is betting on consumer scale converting to enterprise lock-in through the super app. Anthropic is betting on enterprise entrenchment through developer tools and safety credibility converting to cultural authority.

Both strategies are rational. Both are expensive. And both require winning on dimensions that have nothing to do with benchmark scores.

**The efficiency insurgents are the real threat to both.** Arcee AI's Trinity-Large at 96 percent lower cost, PrismML's Bonsai running on an iPhone, Google's Gemma 4 under Apache 2.0 — these are not competitors in the traditional sense.

They are dissolvers of the premise that frontier capability requires frontier spending. If an enterprise can get 85 percent of Claude's or GPT's capability at 5 percent of the cost, the $200-a-month subscription model and the enterprise contract model both come under pressure simultaneously. The Medvi story, a telehealth startup built by two brothers for $20,000 that is now on pace for $1.8 billion in sales, is the existence proof that the efficiency frontier, not the capability frontier, is where value creation is actually happening.

**Platform incumbents are repositioning, not retreating.** Apple's iOS 27 AI Extensions strategy, covered on Tuesday, is the clearest example.

Apple is not trying to win the model race. It is trying to own the distribution layer through which users access whatever model wins. Google's Intrinsic fold-in gives DeepMind direct access to robotics.

Microsoft's Copilot Council mode pits Anthropic and OpenAI against each other inside Microsoft's own interface — a move that is transparently designed to make Microsoft the winner regardless of which model is better. These are not AI companies. These are platform companies using AI as the mechanism for their next phase of platform lock-in.

3. Market Evolution: New Opportunities and Threats

When viewed as interconnected rather than isolated, this week's developments reveal three market opportunities and two existential threats.

**Opportunity one: AI orchestration and routing infrastructure.** The convergence of platform consolidation, compute scarcity, and model proliferation creates massive demand for systems that intelligently route workloads across providers based on cost, capability, and availability. OpenRouter, which was mentioned Monday, is an early mover, but the market for intelligent AI traffic management is nascent and growing fast. Every enterprise that takes the multi-vendor advice seriously, and this week made that advice urgent, needs orchestration tooling. This is a multi-billion-dollar infrastructure category forming in real time.

**Opportunity two: AI governance and compliance platforms.** The institutional fragmentation pattern means every enterprise operating in AI will need systems that can map their AI usage against multiple, evolving, and incompatible regulatory frameworks. Newsom's procurement standards, the EU AI Act, sector-specific regulations in healthcare and finance, and whatever federal framework eventually emerges: tracking compliance across all of these simultaneously is a software problem, not a legal problem. The companies that build the "Workday for AI governance" will capture enormous enterprise value.

**Opportunity three: efficient inference and edge deployment.** PrismML's Bonsai, the hierarchical KV offloading research Joanna flagged, the Mac Studio as a local inference platform: these all point to a market where the winning strategy is not "biggest model" but "right-sized model, right-placed compute, minimum cost." The companies that master efficient deployment, reducing the $1 million-a-day Sora problem to sustainable unit economics, will unlock use cases that the frontier labs are currently pricing out of existence.

**Threat one: platform dependency risk is now acute.** Disney learned a multimillion-dollar pilot was dead with an hour's notice. Claude users hit rate limits within an hour of normal work. OpenAI reset Codex limits twelve times in one month.

If your business depends on a single AI platform, this week demonstrated — repeatedly, across multiple providers — that your operations can be disrupted without warning by decisions made entirely outside your control. This is not a theoretical risk. It happened, this week, to some of the most sophisticated technology users on the planet.
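One way to picture the orchestration and routing idea, and the multi-vendor hedge against the dependency risk just described, is a small router that tries the cheapest adequate provider first and falls back when a call fails. This is a minimal sketch, not any vendor's API: the provider names, prices, capability scores, and stub functions are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Provider:
    name: str                    # hypothetical label, not a real endpoint
    cost_per_1k_tokens: float    # made-up price for illustration
    capability: float            # 0..1 score from your own evaluation (made up)
    call: Callable[[str], str]   # sends the prompt; may raise on failure


class AllProvidersFailed(RuntimeError):
    """Raised when no eligible provider returns a response."""


def route(prompt: str, providers: list[Provider], min_capability: float) -> tuple[str, str]:
    """Try the cheapest provider that clears the capability bar, then fall back."""
    eligible = sorted(
        (p for p in providers if p.capability >= min_capability),
        key=lambda p: p.cost_per_1k_tokens,
    )
    errors = []
    for provider in eligible:
        try:
            return provider.name, provider.call(prompt)
        except Exception as exc:  # rate limit, outage, discontinued product
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors) or "no provider meets the capability bar")


# Stub backends standing in for real API clients.
def rate_limited(prompt: str) -> str:
    raise RuntimeError("rate limit reached")


def echo(prompt: str) -> str:
    return f"ok: {prompt}"


providers = [
    Provider("cheap-small", 0.10, 0.70, echo),
    Provider("frontier-a", 2.00, 0.95, rate_limited),
    Provider("frontier-b", 3.00, 0.93, echo),
]

name, reply = route("summarize the contract", providers, min_capability=0.9)
print(name, reply)
```

Here the cheaper frontier provider is rate limited, so the request lands on the second one without the caller changing anything. A production router would also need timeouts, retries, and independent capability scores, which ties back to the point about not relying on vendor-supplied metrics.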

**Threat two: the narrative environment is now adversarial.** When the company building your AI tools also owns a media property and is managed by a political operative, every piece of information you receive about that company's capabilities, limitations, and competitive positioning must be evaluated through a conflict-of-interest lens. The same applies to Anthropic's carefully managed safety narrative.

And to Google's benchmark announcements: their new Gemini Pro model hit record benchmark scores again this week, per the uncovered stories list, a pattern that is starting to feel more like marketing cadence than genuine technical disclosure. Executives need independent evaluation capability, not just vendor-supplied metrics.

4. Technology Convergence: Unexpected Intersections

Three technology intersections surfaced this week that are strategically significant and underappreciated.
