Cost Collapse Meets Capability Ceiling: AI's Turning Point

Episode Summary

Weekly AI Intelligence Briefing: March 22-28, 2026

Full Transcript

STRATEGIC PATTERN ANALYSIS

Pattern One: The Economics of Intelligence Are Being Rewritten — From Both Ends Simultaneously

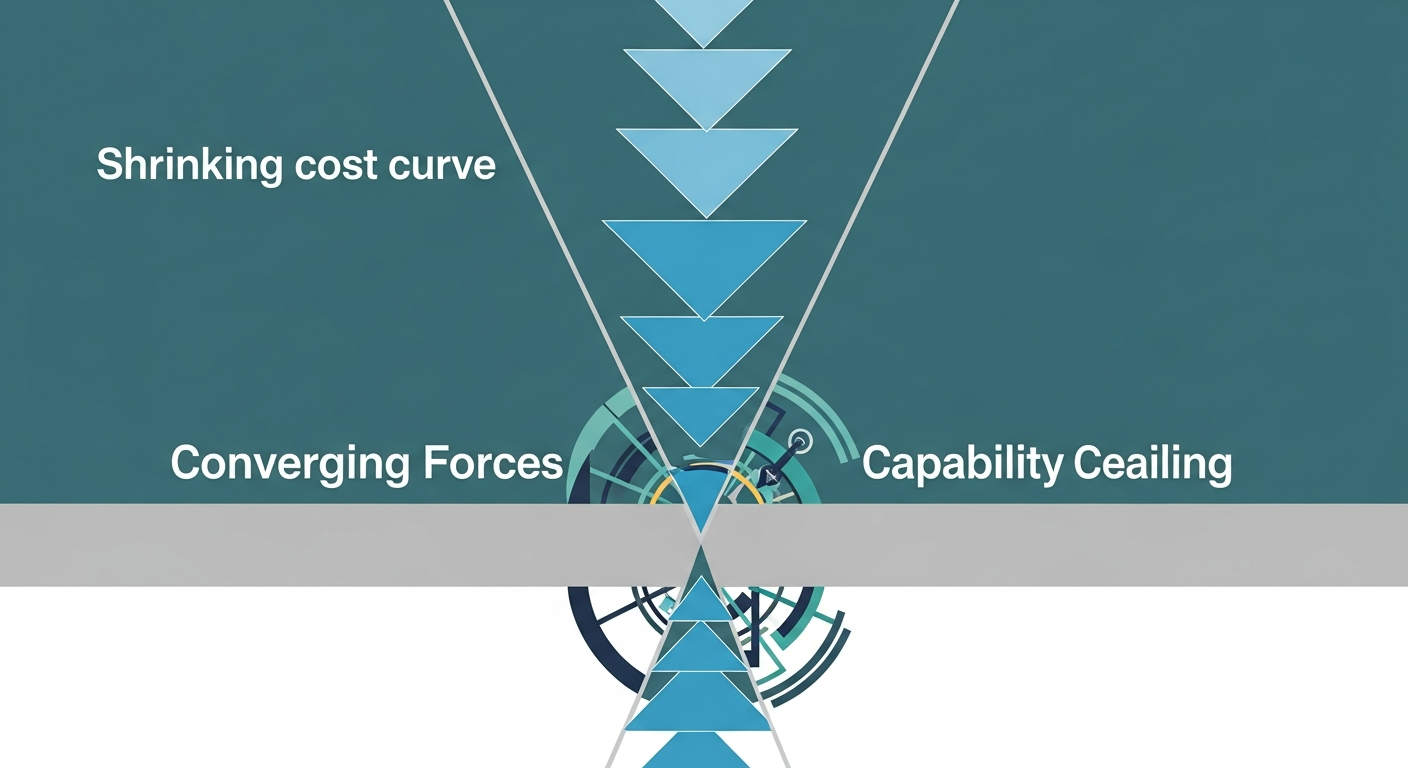

The single most strategically significant development this week isn't any one announcement. It's the convergence of two forces that, taken together, fundamentally alter the cost structure of AI deployment. From the top, Google's TurboQuant algorithm demonstrated six-times compression of KV cache memory with zero accuracy loss — a breakthrough that, as we covered on Saturday, doesn't just improve efficiency at the margins but potentially collapses the inference cost curve that every AI business model is built on.

Memory stocks dropped three to five percent on the news alone. Cloudflare's CEO called it Google's DeepSeek moment, and that comparison is more apt than it might first appear.

From the bottom, ARC-AGI-3, which we analyzed on Friday, revealed that the most capable models on earth score below one percent on tasks every human solves instantly. Zero point three seven percent for Gemini Pro. Zero flat for Grok. This isn't just a humbling benchmark result. It's an empirical ceiling on what the current architecture can do when confronted with genuine novelty.

Here's why these two developments, taken together, are strategically explosive. TurboQuant makes today's AI dramatically cheaper to run. ARC-AGI-3 makes clear that today's AI has hard capability limits on adaptive reasoning. The implication is that we're entering a phase where inference becomes commoditized before the intelligence problem is solved. That's a very specific and very dangerous competitive environment: one where margins compress faster than capabilities expand.

This connects directly to OpenAI's decision, covered Thursday, to kill Sora and redirect compute toward Spud. It connects to the 17.5% guaranteed returns OpenAI offered PE firms on Wednesday. And it connects to Musk's Terafab announcement on Tuesday, a $25 billion bet that the way to win isn't better models but cheaper infrastructure. Every major player this week made moves that only make sense if you believe the cost of intelligence, not the quality of intelligence, is the primary competitive variable right now.
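To put the six-times KV cache figure from this pattern in concrete terms, here is a back-of-the-envelope sketch. The sizing formula (two tensors, keys and values, stored per layer and per token) is the standard one for Transformer KV caches. The model dimensions, the 128,000-token context, and the 80 GiB cache budget are hypothetical numbers chosen for illustration, not figures from Google or from the TurboQuant work, and the six-times ratio is simply applied as reported, with no claim about how the algorithm achieves it.

```python
GIB = 1024 ** 3

def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Bytes of KV cache for one sequence: keys + values for every layer and token."""
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value

def sessions_that_fit(budget_bytes, per_session_bytes):
    """How many full-length sessions fit in a fixed cache budget."""
    return int(budget_bytes // per_session_bytes)

# Hypothetical model: 32 layers, 8 KV heads, head dimension 128, 16-bit values.
baseline = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=128_000)
compressed = baseline / 6  # the six-times compression reported for TurboQuant

budget = 80 * GIB  # hypothetical memory set aside for KV cache on one accelerator
print(f"baseline:   {baseline / GIB:.2f} GiB per session, "
      f"{sessions_that_fit(budget, baseline)} sessions")
print(f"compressed: {compressed / GIB:.2f} GiB per session, "
      f"{sessions_that_fit(budget, compressed)} sessions")
```

Under these assumed numbers, the same memory budget goes from 5 concurrent long-context sessions to 30. That is the sense in which a cache compression result can move the inference cost curve rather than just trim it.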

Pattern Two: The Agentic Stack Is Consolidating Around Infrastructure Owners — and the Middleware Layer Is Being Crushed

When Thom covered AWS Frontier Agents on Sunday, the analysis flagged a specific risk: that enterprise-grade agent orchestration as a managed service would compress the value proposition of standalone orchestration startups. By Friday, that thesis had been reinforced from multiple directions.

Anthropic's week was a masterclass in agentic stack consolidation. Monday brought Cowork: collaborative AI sessions. Wednesday brought Dispatch: Claude controlling your macOS desktop remotely while you assign tasks from your phone. Thursday brought Auto Mode for Claude Code and new multi-agent architecture details. And threading through all of it was the market share data: Claude Code now commands somewhere between 42 and 54 percent of the coding market. That's not a product. That's a platform.

NVIDIA reinforced this pattern from the security layer. As Joanna flagged on Saturday, NVIDIA's NeMoCLAW release — purpose-built infrastructure for securing autonomous AI agents — is the kind of plumbing that only matters if agentic deployment is real and scaling. You don't build security infrastructure for hypothetical workloads.

ARM's AGI CPU announcement on Thursday, with Meta as launch customer and OpenAI, Cerebras, and Cloudflare signed on, adds the hardware layer to this consolidation story. When chip designers are building silicon specifically optimized for agentic inference workloads, the agentic era isn't a roadmap item. It's a procurement decision.

The strategic significance here goes beyond any single company. What we watched this week was the agentic stack crystallizing into layers (hardware, infrastructure, orchestration, security, model), and the companies that own the infrastructure layers are systematically absorbing the orchestration layer above them. AWS did it with Frontier Agents. Anthropic is doing it with Claude Code plus Dispatch plus Cowork. The middleware players, the LangChains and the agent orchestration startups, are being compressed from above and below simultaneously.

This connects to a story from our uncovered list that deserves attention: Anthropic bringing Claude Code to the web, which appeared ten times in RSS feeds this week but wasn't part of our daily coverage. That move extends Claude Code's reach beyond terminal-native developers to a broader audience, further cementing the platform moat. And NVIDIA's Nemotron 3 open models, also from our uncovered list this week, represent the other side of the same consolidation: NVIDIA ensuring its hardware ecosystem has native model support for agentic workloads.

Pattern Three: OpenAI's Strategic Identity Is Fracturing Under Financial Pressure

This was, by any measure, the most revealing week for OpenAI's internal strategic tensions since the board crisis of late 2023. And the pattern that emerges when you trace the full arc — from Wednesday through Friday — is not of a company executing a coherent strategy. It's of a company making emergency course corrections under extraordinary financial pressure.

Wednesday, as Lia covered, OpenAI offered private equity firms a guaranteed 17.5% minimum return to raise roughly four billion dollars in enterprise joint ventures. That's not how companies with strong revenue trajectories raise capital. That's how companies with urgent cash needs and narrowing conventional options attract money. Om Malik's analysis was blunt: no healthy business needs these terms.

Thursday, OpenAI killed Sora, its most culturally resonant product, the one that hit number one on the App Store, the one backing a billion-dollar Disney partnership. Disney found out thirty minutes after wrapping a joint meeting. As Thom analyzed on Thursday, the compute economics of video generation simply couldn't be justified against the opportunity cost of serving ChatGPT and Codex workloads. Fidji Simo's internal directive to stop chasing "side quests" made the priority hierarchy explicit.

Friday, the other shoe dropped: an additional ten billion dollars in funding, pushing the total round past $120 billion. Simultaneously, ChatGPT ads crossed $100 million in annualized revenue, a new monetization channel that would have been unthinkable a year ago for a company positioning itself as the builder of AGI.

The strategic identity fracture is this: OpenAI is simultaneously claiming to be on the doorstep of AGI (renaming its product division "AGI Deployment," positioning Spud as potentially its first AGI claim) while making the financial moves of a company that needs to monetize aggressively right now. It's promising PE investors guaranteed returns while telling the world it's building transformative general intelligence. It's killing creative products to free up compute while launching an advertising business. These aren't contradictions that can coexist indefinitely. At some point, the market will demand a coherent answer to the question: is OpenAI a research lab building AGI, or is it an enterprise software company selling AI services? The IPO, when it comes, will force that answer.

This pattern connects to ARC-AGI-3 in a way that should concern OpenAI's investors specifically. If the best models score below one percent on genuine adaptive reasoning, then the AGI narrative underpinning OpenAI's valuation is on shakier empirical ground than the company's public positioning suggests. The gap between marketing and measurement widened this week.

Pattern Four: The Geopolitical AI Order Is Fragmenting Faster Than Regulatory Frameworks Can Form

Monday's deep dive on the White House AI framework revealed a federal government racing to assert preemptive authority over AI regulation before states fill the vacuum. By Friday, the picture had become considerably more complex, and more fractured.

The Manus acquisition blockade is the sharpest signal. Chinese authorities restricted the co-founders of AI agent firm Manus from leaving the country while reviewing Meta's $2.5 billion acquisition bid. Beijing is now treating cross-border AI deals as national security matters, which means the already-narrow window for Western-Chinese AI collaboration is closing further.

Alibaba's Qwen crossing ten million downloads globally, covered Sunday, tells the parallel story from the other direction: Chinese open-source AI is achieving global distribution regardless of geopolitical friction. The Western-centric grip on AI dominance isn't just loosening; it's been structurally broken by open-source distribution that doesn't require diplomatic cooperation.

The Anthropic-Pentagon confrontation, resolved Saturday with a preliminary injunction, adds the domestic dimension. A federal judge blocked the Pentagon's "supply chain risk" designation of an American AI company, writing that nothing in the law supports branding a domestic firm a potential adversary for policy disagreements. That's a remarkable judicial statement about the boundaries of government power over AI companies, and it landed the same week Anthropic began preparing for a sixty-billion-dollar IPO.

From our uncovered stories, New York signing off on AI safety legislation deserves mention here. State-level AI regulation continues advancing regardless of the White House's preemption play. And the Sanders-AOC data center moratorium proposal, while unlikely to pass, signals that infrastructure buildout, the physical manifestation of AI scaling, is becoming a political target in its own right.

The strategic significance is this: there is no unified regulatory framework forming. Not domestically, where federal and state authorities are actively competing for jurisdiction. Not internationally, where China and the West are decoupling on AI talent, IP, and infrastructure. Companies building global AI products are now navigating not one regulatory environment but several simultaneously conflicting ones. The compliance cost of that fragmentation is real and growing.

CONVERGENCE ANALYSIS

Systems Thinking: The Reinforcing Loop Between Cost Compression and Capability Ceilings

When you stop analyzing these four patterns in isolation and look at them as an interacting system, a very specific dynamic emerges, one that I think defines the strategic reality of AI for the next twelve to eighteen months.

The cost of running current AI is falling fast. TurboQuant. Terafab. Amazon Trainium winning Anthropic, OpenAI, and Apple as customers. Open-source models crossing ten million downloads. Every signal points toward inference becoming dramatically cheaper.

Simultaneously, the capability ceiling of current architectures is becoming empirically visible. ARC-AGI-3 is the starkest evidence, but it's not the only data point. The USC study showing that "you are an expert" prompting actually degrades accuracy. The Wharton research on cognitive surrender. The architectural hedging toward diffusion models, gated delta networks, and hybrid systems. The current paradigm is being pushed toward its limits.

These two forces create a reinforcing loop. As inference costs fall, the economic barrier to deploying current-generation AI drops, which accelerates adoption. As adoption accelerates, the capability limitations become more visible in more contexts, which increases demand for architectural breakthroughs that don't yet exist. This creates a period where AI is simultaneously becoming ubiquitous and hitting walls: cheap enough to be everywhere, but not capable enough to deliver on the most ambitious promises being made about it.

OpenAI's financial stress is a direct symptom of this dynamic. The company raised capital on AGI promises but needs to generate revenue from current-capability products. The 17.5% guarantee, the advertising business, the Sora shutdown: these are all responses to the gap between the promise and the present.

The agentic consolidation pattern amplifies this further. AWS, Anthropic, and NVIDIA are all building infrastructure for autonomous agents, but ARC-AGI-3 tells us those agents can't handle genuine novelty. The enterprise agentic market is scaling around agents that are excellent at pattern-matching within known domains but fail catastrophically when faced with truly novel situations. That's manageable in controlled enterprise environments, which is exactly why coding, document processing, and workflow automation are the dominant use cases. But it means the ceiling on agentic value creation is lower than the most aggressive narratives suggest, at least until the architectural problem is solved.

Competitive Landscape Shifts: The Three-Layer War

Viewed as a combined force, this week's developments reveal that the AI competitive landscape has stratified into three distinct layers, each with its own winners and losers.

**Layer One: Infrastructure.** This is where Google, AWS, Musk's Terafab, and ARM are competing. The weapons are compression algorithms, custom silicon, orbital data centers, and managed agent platforms. TurboQuant gives Google a structural cost advantage that compounds across every product. Terafab is Musk's play to own his own supply chain entirely. AWS Frontier Agents is Amazon's bet that agent orchestration belongs at the infrastructure layer, not the application layer. The winners at this layer are companies with both the technical capability and the capital to operate at planetary scale. The losers are companies dependent on others for compute, which, as OpenAI's own filings acknowledged this week, includes OpenAI itself.

**Layer Two: Platform.** This is the Anthropic versus OpenAI versus Google contest. The battle here is for developer workflow integration and enterprise account control. Anthropic's week (Claude Code at 42-54% coding market share, Cowork, Dispatch, Auto Mode, Claude Code coming to the web) represents a platform strategy executing with remarkable coherence. OpenAI's week (killing Sora, offering PE sweeteners, launching ads) represents a platform strategy under financial duress. Google's week (Gemini 3.1 Flash Live in 200-plus countries, memory portability, TurboQuant) represents a platform strategy backed by infrastructure advantages the other two can't match. The emerging winner at this layer is whoever achieves workflow stickiness first. Right now, that's Anthropic in coding and Google in consumer search. OpenAI's ChatGPT remains the largest consumer product by users, but the monetization path (ads, enterprise JVs with guaranteed returns) suggests the user base isn't converting to revenue efficiently enough.

**Layer Three: Application.** This is where enterprises and vertical AI companies operate. The strategic takeaway from this week is that application-layer companies are in the best negotiating position they've had in years. OpenAI and Anthropic are competing for enterprise revenue with increasing desperation. Google is launching memory portability to poach users. The infrastructure layer is driving costs down. If you're a buyer of AI capability rather than a builder of it, this is your moment to negotiate aggressive terms, demand interoperability, and avoid lock-in. From our uncovered stories, Cohere's $240 million revenue year setting the stage for an IPO illustrates what a successful application-layer strategy looks like: focused vertical positioning, enterprise revenue traction, and an IPO narrative built on business fundamentals rather than AGI promises.

Market Evolution: Three Emergent Opportunities

When these developments are viewed as interconnected rather than isolated, three market opportunities emerge that weren't visible in any single day's coverage.

**First: The AI Cost Arbitrage Window.** TurboQuant, Trainium adoption, open-source model proliferation, and Terafab's long-term compute play all point toward a significant inference cost reduction over the next 12 to 18 months. Companies that build products today assuming tomorrow's cost structure (designing for long-context workloads, persistent agent sessions, real-time voice, and high-concurrency deployments) will have a structural advantage when those costs actually materialize. The window to design for that future is now, before competitors recognize the same opportunity.

**Second: The Cognitive Infrastructure Market.** The Wharton cognitive surrender research and the ARC-AGI-3 results, taken together, point toward a market need that barely exists today: tools and services that help organizations maintain human judgment quality in AI-augmented environments. Verification systems. Structured audit workflows. Training programs that build what you might call AI-literate skepticism. This isn't a technology market; it's a human capital and process design market. And it's going to grow dramatically as agentic AI penetrates more enterprise workflows.

**Third: The Architecture Transition.** Mercury 2's diffusion-based approach hitting a thousand tokens per second. Qwen 3.5's Gated Delta Networks. Xiaomi's Hunter Alpha with million-token context windows. The models that eventually crack ARC-AGI-3 will likely be architecturally different from today's Transformers. Companies and investors positioning at the frontier of non-Transformer architectures, or building infrastructure that's architecture-agnostic, are positioning for the next capability leap rather than optimizing for the current one.
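The cost arbitrage window in the first opportunity can be sketched as a break-even calculation: given what a unit of work costs to serve today and a steady assumed rate of cost decline, when does a product priced for tomorrow's costs stop losing money? Every number below (the $1.00 cost today, the $0.40 price, the 10% and 1% monthly declines) is a hypothetical input, and the constant-rate decline is a simplifying assumption, not a forecast from this briefing. Only the 18-month horizon comes from the analysis above.

```python
def unit_cost(cost_today, monthly_decline, month):
    """Serving cost per unit after `month` months of compounding decline."""
    return cost_today * (1 - monthly_decline) ** month

def breakeven_month(cost_today, price, monthly_decline, horizon=18):
    """First month within the horizon where cost is at or below price, else None."""
    for month in range(horizon + 1):
        if unit_cost(cost_today, monthly_decline, month) <= price:
            return month
    return None

# Hypothetical product: costs $1.00 per session to serve today, priced at $0.40.
fast = breakeven_month(cost_today=1.00, price=0.40, monthly_decline=0.10)
slow = breakeven_month(cost_today=1.00, price=0.40, monthly_decline=0.01)
print("10% monthly decline:", fast)
print(" 1% monthly decline:", slow)
```

With a 10% monthly decline, this hypothetical product breaks even in month 9, inside the 12-to-18-month window. At 1% it never does within the horizon, which is why the bet only pays off if the cost reductions actually materialize.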

Technology Convergence: The Unexpected Intersections

Three convergence points stood out this week that don't fit neatly into any single category.

The first is the convergence of agentic AI and physical infrastructure. Musk's Terafab combines chips, robots, and orbital data centers into a single vertical stack. NVIDIA's robotics chief predicted agents coordinating entire robot fleets. ARM designed its first in-house chip specifically for agentic workloads. The software abstraction of "agent" is colliding with the physical reality of silicon, energy, and logistics faster than most enterprise strategies account for.

The second is the convergence of AI economics and traditional finance. Norway's $2.1 trillion sovereign wealth fund letting AI make limited investment decisions. OpenAI structuring PE deals with guaranteed return floors. ChatGPT ads crossing $100 million annualized. AI is no longer just a technology sector; it's becoming embedded in financial infrastructure itself. The Adobe-Semrush acquisition at $1.9 billion, from our uncovered list, is another data point: traditional software companies are acquiring AI-adjacent capabilities at premium valuations, signaling that the M&A cycle for AI-native companies is accelerating.
