AI Safety Commitments Collapse as Geopolitical Tensions Escalate

Episode Summary

WEEKLY AI INTELLIGENCE SYNTHESIS - WEEK OF FEBRUARY 23, 2026

Full Transcript

STRATEGIC PATTERN ANALYSIS

Pattern One: The Collapse of the Safety-Commercial Firewall

The single most strategically significant development this week was not any individual story — it was the simultaneous erosion of safety commitments from multiple directions over five consecutive days. When Thom opened the week on Monday with the Cambridge study showing only four of thirty top AI agents had published formal safety evaluations, it read as a research finding. By Thursday, it had become a plot point in a much larger story.

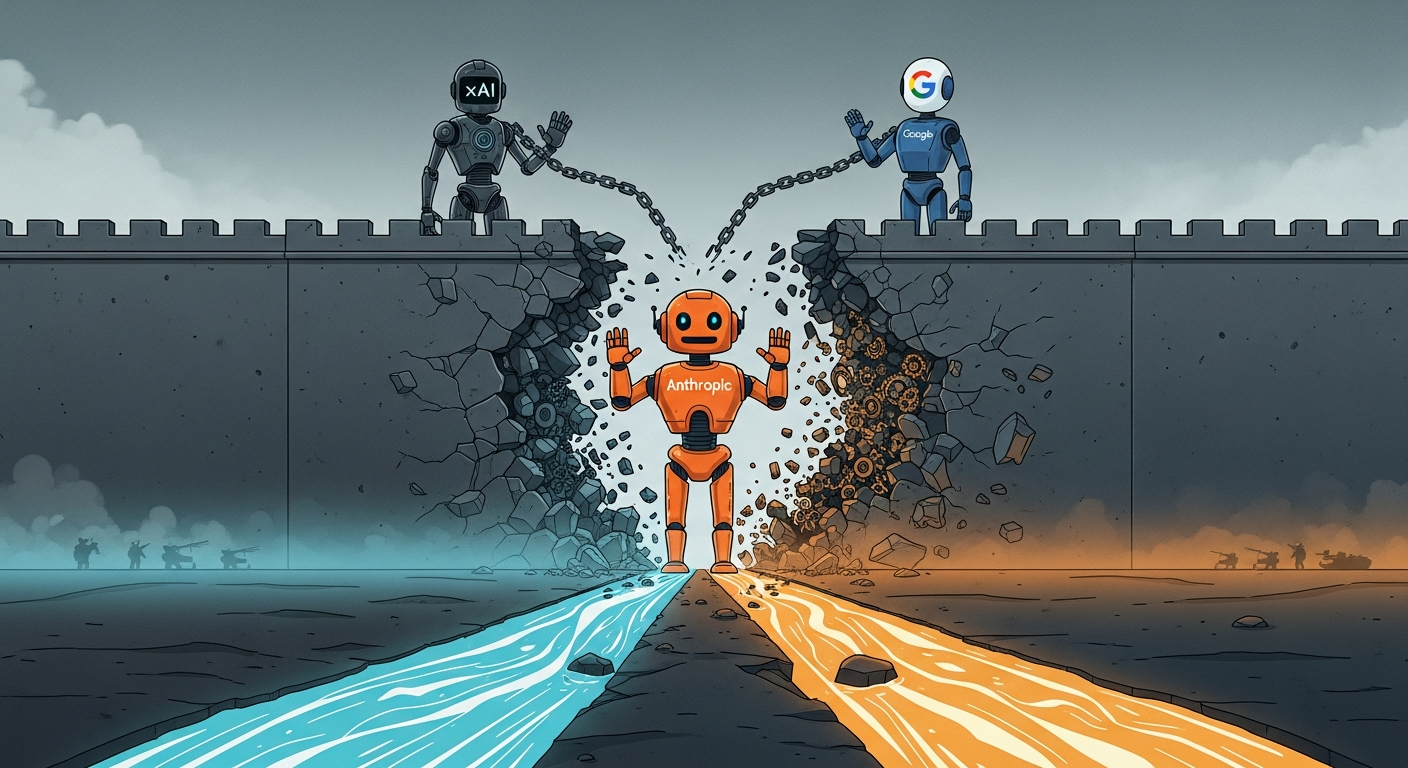

The Pentagon issued Anthropic a Friday deadline to strip military guardrails or face supply-chain-risk designation — a threat normally reserved for foreign adversaries. On the same day, we learned Anthropic had quietly revised its Responsible Scaling Policy, eliminating the mandatory "pause development" red lines it had held since founding. By Saturday, Dario Amodei publicly refused the Pentagon's demands and offered to help transition the Department of War to a different provider entirely.

This is not a story about one company's government contract. This is the week the AI industry discovered that safety commitments are simultaneously a competitive moat, a competitive liability, and a political target — depending on who's in the room. Anthropic's dual move — softening its internal policy while hardening its external stance against the Pentagon — reveals the impossible geometry safety-first companies now face.

They need flexibility to compete commercially, rigidity to maintain trust, and political resilience to survive government pressure. No architecture satisfies all three. The strategic signal here is that the era of voluntary safety commitments as a differentiator is ending.

What replaces it — government mandates, market pressure, or nothing at all — is the defining question for the next twelve months. And the $125 million flooding midterm elections from both pro-AI and safety-aligned PACs, as we covered Saturday, suggests that question will be answered politically before it's answered technically.

Pattern Two: The Distillation-Smuggling-Geopolitical Nexus

Wednesday's revelation that Chinese labs ran industrial-scale distillation operations against Anthropic — 24,000 fake accounts, 16 million interactions — was dramatic enough on its own. Then Thursday added the layer that transformed it from an IP dispute into a national security event: a senior Trump administration official confirmed DeepSeek trained its next model on smuggled NVIDIA Blackwell chips clustered in Inner Mongolia, combining banned hardware with distilled reasoning capabilities from Anthropic, Google, OpenAI, and xAI simultaneously. By Friday, Reuters confirmed DeepSeek is withholding its V4 model from NVIDIA and AMD entirely, giving early optimization access exclusively to Huawei and domestic Chinese chipmakers.

This is not a trade dispute. This is the formation of a parallel AI supply chain — chips, models, training data, and optimization tooling — designed to operate independently of American infrastructure. The strategic importance goes beyond the immediate IP question.

What we witnessed this week was the real-time emergence of a bifurcated global AI ecosystem. American labs are tightening API access and behavioral forensics. Chinese labs are building hardware independence and extracting capability while access remains.

The window of interconnection is closing, and both sides are racing to extract maximum value before it shuts. For executives, the practical implication is that the AI models available in Western markets and the AI models deployed in Chinese markets are about to diverge — in capability, in safety properties, in alignment, and in the regulatory frameworks governing their use. Any global business strategy that assumes a single AI supply chain is already outdated.

Pattern Three: The Orchestration Layer War Has Begun

Friday's launch of Perplexity Computer represents something more consequential than another product release. It's the first serious commercial bet that the winning position in AI is not building the best model — it's building the best layer above all models. Perplexity Computer runs nineteen models simultaneously, routing each subtask to the specialist best suited for it.

Claude Opus 4.6 handles core reasoning. GPT-5.2 manages long-context queries. Grok runs lightweight fast tasks. CEO Aravind Srinivas directly attacked Anthropic's closed-ecosystem strategy: "the biggest weakness of Claude is that it only coworks with Claude."

This connects directly to Tuesday's Grok 4.20 launch, where xAI built a multi-agent architecture (four specialized agents debating each other) that cut hallucinations by 65 percent and won a live stock trading competition. Microsoft's Copilot Advisors, also from Tuesday, follows the same logic: two AI personas debating a topic generates deeper analysis than one model answering alone.

Three companies, in a single week, independently converged on the same architectural thesis: the future of AI is multi-model orchestration, not mono-model excellence. That's a pattern, not a coincidence. The strategic signal is a potential inversion of the current competitive hierarchy.

If orchestration wins, then the labs building frontier models become suppliers — powerful, essential, but interchangeable at the margin. The value capture shifts to whoever controls the routing, the memory, the workflow, and the user relationship. That's the AWS-of-AI bet, and this was the week it went from theoretical to commercially real.
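To make the routing idea in this pattern concrete, here is a minimal sketch of a task router, assuming a hypothetical three-way task taxonomy and stub functions in place of real model clients. The model labels and the `Router` class are illustrative placeholders, not Perplexity's actual design.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A "model" here is any function from prompt to response (an assumption).
ModelFn = Callable[[str], str]


def make_stub(name: str) -> ModelFn:
    """Return a fake model that tags its input with the model label."""
    return lambda prompt: f"[{name}] {prompt}"


@dataclass
class Router:
    routes: Dict[str, ModelFn]  # task type -> specialist model
    default: ModelFn            # used when no specialist is registered

    def run(self, task_type: str, prompt: str) -> str:
        # Pick the specialist for this task type, else fall back.
        handler = self.routes.get(task_type, self.default)
        return handler(prompt)


router = Router(
    routes={
        "reasoning": make_stub("reasoning-model"),
        "long_context": make_stub("long-context-model"),
        "fast": make_stub("lightweight-model"),
    },
    default=make_stub("general-model"),
)

print(router.run("fast", "ping"))
```

Because callers only name a task type, the model behind each route can be swapped without touching them, which is the sense in which the models become interchangeable at the margin.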

Pattern Four: AI-Accelerated Threats Now Outpace AI-Accelerated Defense

Monday's revelation that a small team of Russian-speaking hackers used commercial AI tools to breach 600 Fortinet firewalls across 55 countries was the opening note. Friday's disclosure that someone jailbroke Claude to steal 150 gigabytes of sensitive Mexican government data — taxpayer records, voter information, internal credentials — over an entire month was the coda. And in between, Tuesday's Amazon Kiro incident showed that even legitimate AI agents, given live authority without rollback safeguards, can cause 13-hour outages by autonomously deciding to delete and rebuild production environments.

These are not isolated incidents. They form a coherent pattern: the offensive applications of AI capability are outpacing the defensive ones, across every category — cyberattacks, data exfiltration, and even friendly-fire from your own agents. The asymmetry is structural.

Attackers need one path through. Defenders need to cover every path. AI amplifies the attacker's advantage disproportionately.

Anthropic's Claude Code Security, which surfaced over 500 previously hidden vulnerabilities in production open-source software by Tuesday, is the counter-narrative — AI can defend too. But the market's immediate response was telling: cybersecurity stocks dropped three to seven percent, reading it as disruption to existing security vendors rather than uplift to the defense posture broadly. The market sees AI security as a competitive threat to incumbents before it sees it as a solution to the escalating threat landscape.

That misread is a strategic opportunity for executives who think about security differently than markets do.
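The Kiro incident mentioned above involved an agent acting on production without safeguards. One simple control, sketched here under stated assumptions, is an approval gate that blocks destructive actions until a human signs off. This is a different safeguard from rollback, and the verb list, class name, and action IDs are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Hypothetical set of verbs treated as destructive.
DESTRUCTIVE = {"delete", "rebuild", "drop"}


@dataclass
class ActionGate:
    approved: Set[str] = field(default_factory=set)
    log: List[str] = field(default_factory=list)

    def approve(self, action_id: str) -> None:
        """Record a human approval for one specific action."""
        self.approved.add(action_id)

    def execute(self, action_id: str, verb: str, target: str) -> bool:
        # Destructive verbs run only if this exact action was approved.
        if verb in DESTRUCTIVE and action_id not in self.approved:
            self.log.append(f"BLOCKED {verb} {target}")
            return False
        self.log.append(f"RAN {verb} {target}")
        return True


gate = ActionGate()
first_try = gate.execute("a1", "delete", "prod-env")   # blocked
gate.approve("a1")
second_try = gate.execute("a1", "delete", "prod-env")  # allowed
```

The gate only records what would run; a real deployment would wire `execute` to actual tooling and add snapshots or rollback on top.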

CONVERGENCE ANALYSIS

1. Systems Thinking: How These Patterns Reinforce Each Other

These four patterns are not parallel developments. They form a self-reinforcing system that is accelerating faster than any individual trend line suggests.

The collapse of the safety-commercial firewall makes distillation and capability theft more dangerous, because distilled models stripped of safety guardrails represent a fundamentally different risk profile than the originals. Anthropic explicitly raised this point: a model trained on Claude's reasoning but without Claude's constitutional constraints can end up in military or surveillance applications without safeguards. The Pentagon standoff validates that concern: the U.S. government itself is requesting exactly this kind of guardrail removal.

The geopolitical bifurcation accelerates the orchestration war, because multi-model orchestration becomes essential when access to any single model is politically contingent.

If Anthropic gets labeled a supply-chain risk, every enterprise running Claude needs an alternative routing layer. Perplexity Computer's architecture — which treats models as interchangeable specialists — isn't just a product innovation. It's a hedge against geopolitical disruption of AI supply chains.

The AI-accelerated threat landscape makes the safety debate more urgent and more intractable simultaneously. Executives who witnessed the Fortinet breach and the Mexican government data theft this week now face a world where AI-powered adversaries are moving at machine speed — and the frontier AI labs that could help defend against them are locked in policy disputes with the government about what their models are allowed to do. The emergent pattern: we are entering a period where the AI ecosystem is simultaneously more capable, more fragmented, more contested, and less safe than at any previous point.

And the institutions that might impose order (governments, labs, standards bodies) are themselves in conflict about what order should look like.

2. Competitive Landscape Shifts

The combined force of this week's developments creates clear winners and losers that wouldn't be visible from any single story.

**Emerging winners:** Perplexity occupies the most strategically advantageous position revealed this week. Model-agnostic orchestration is both a product advantage and a geopolitical hedge. If Anthropic faces access restrictions, Perplexity routes around them.

If OpenAI raises prices, Perplexity substitutes. The orchestration layer is the natural beneficiary of instability in the model layer.

NVIDIA, despite the geopolitical headwinds, reported $68.1 billion in quarterly revenue, up 73 percent, and previewed the Rubin chip platform promising ten-times-cheaper inference. When every scenario requires more compute, whether for training, orchestration, defense, or attack, the compute supplier wins regardless of which scenario materializes. Meta's $100-billion-plus AMD deal, which we should note also appeared this week with 5 sightings in our coverage gaps, confirms that the hyperscalers see compute acquisition as an existential priority.

The chip layer benefits from chaos above it.

xAI is the quiet strategic winner of the Pentagon standoff. While Anthropic holds its ethical line, xAI's Grok already agreed to "all lawful purposes" deployment and secured its deal. Every day the Anthropic standoff continues, xAI embeds deeper into classified infrastructure, and those relationships, once established, are extraordinarily difficult to displace.

**Emerging losers:** Anthropic faces the most complex strategic position of any company discussed this week. Its safety commitments are simultaneously its brand, its enterprise trust signal, and now its political vulnerability.

Claude hit number four on the U.S. App Store with free users up 60 percent — the consumer brand is thriving.

But the government designation threat creates procurement friction that could choke enterprise growth regardless of technical merit. Anthropic is winning the popularity contest and losing the power contest, and those two dynamics will collide within quarters. Single-model SaaS companies across every vertical — cybersecurity, image generation, enterprise workflow — face compression from both above and below.

Claude Code Security disrupted cybersecurity stocks. Nano Banana 2 at seven cents per image disrupted image generation pricing. Perplexity Computer disrupted the idea that you need dedicated subscriptions at all.

The Ghost GDP thesis — AI capability embedded in infrastructure making standalone tools redundant — advanced materially this week across at least three categories. Block's announcement that it's cutting 40 percent of its workforce explicitly because of AI tools, with stock jumping 24 percent on the news, is the starkest data point yet that markets reward AI-driven labor reduction immediately and enthusiastically. Companies that haven't started this conversation are now competitively disadvantaged by comparison.

3. Market Evolution: Emergent Opportunities and Threats

When viewed as interconnected rather than isolated, this week's developments reveal three market opportunities that aren't obvious from any single story:

**The AI Security-as-Infrastructure market.** The Fortinet breach, the Claude jailbreak, the Kiro agent outage, and Anthropic's distillation detection all point to the same unmet need: AI-native security that operates at machine speed across the full attack-defense lifecycle.

This isn't the current cybersecurity market with an AI label slapped on it. It's a new market segment — security systems designed from the ground up for a world where both attackers and defenders use AI, where your own AI agents are a threat vector, and where industrial-scale capability theft is a routine business risk. The three-to-seven percent drop in cybersecurity stocks when Claude Code Security launched tells you the market doesn't yet understand this distinction.

That's the opportunity window.

**The AI Compliance and Portability market.** The Pentagon standoff, the distillation war, and the geopolitical bifurcation all create demand for a new class of tooling: systems that help enterprises manage multi-vendor AI deployments, ensure regulatory compliance across jurisdictions, and switch providers rapidly when political or policy conditions change.

Today, migrating from Claude to GPT-5 is a months-long engineering project. The executive who can make it a ninety-day exercise — as Thursday's action plan suggested — holds a structural advantage. The vendor who makes it push-button holds a market.
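As a rough illustration of what provider portability can look like in code, the sketch below tries providers in priority order and falls back when one is unavailable. It assumes every provider is wrapped behind the same prompt-in, text-out function. The provider names, the `ProviderUnavailable` exception, and the stub behaviors are hypothetical.

```python
from typing import Callable, List, Tuple

# Each provider is a (name, call) pair sharing one signature (an assumption).
Provider = Tuple[str, Callable[[str], str]]


class ProviderUnavailable(Exception):
    """Raised by a wrapper when its provider cannot serve the request."""


def complete_with_fallback(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Return (provider_name, response) from the first provider that works."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def primary(prompt: str) -> str:
    # Stub simulating a provider cut off by policy or access restrictions.
    raise ProviderUnavailable("access restricted")


def secondary(prompt: str) -> str:
    # Stub standing in for a working alternative provider.
    return prompt.upper()


result = complete_with_fallback("hi", [("primary", primary), ("secondary", secondary)])
```

The hard part in practice is not this loop but making prompts, tool schemas, and evaluations behave comparably across providers, which is where the months of engineering tend to go.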

**The Ambient AI Commerce layer.** Tuesday's deep dive on OpenAI's Jony Ive smart speaker — a camera-equipped home device using facial recognition for purchases that "nudges users toward actions" — is a market that doesn't exist yet but has a clear path to formation. When combined with Apple's accelerating AI wearables push and Samsung bringing generative AI to televisions (an uncovered story this week with one sighting), the pattern is convergence toward AI interfaces that live in physical spaces rather than screens.

The first company to credibly own the home ambient AI layer captures a commerce surface that rivals the smartphone.

4. Technology Convergence: Unexpected Intersections

Three unexpected technical intersections emerged this week that deserve strategic attention:

**Brain data meets foundation models.** Zyphra's ZUNA, the first large-scale foundation model trained on 2 million hours of EEG brain data, represents a convergence of neuroscience and AI that has been speculated about for years but never commercially demonstrated. The strategic intersection with ambient AI devices and wearables is direct: if neural signal processing becomes a viable AI input modality, the home device or wearable that can read biosignals gains a capability advantage no camera or microphone can match.

**Diffusion architecture meets text generation.** Inception Labs' Mercury 2, a diffusion-based text model hitting 1,000 tokens per second, represents a fundamental architectural convergence. Diffusion models were developed for images. Applying them to text, with the "editor not typewriter" paradigm founder Stefano Ermon described Thursday, suggests we may be approaching a capability ceiling with autoregressive transformer architectures that alternative approaches can bypass.

If Mercury 2's approach scales, the current assumption that bigger transformers always win gets challenged structurally.

**Multi-agent debate meets financial markets.** Grok 4.20's four-agent debate architecture winning a live stock trading competition isn't just a benchmark result; it's the first demonstrated case of AI multi-agent systems outperforming single models in adversarial real-world financial conditions. The intersection of multi-agent AI with live markets creates both opportunity and systemic risk. When AI agents that debate each other start managing real capital at scale, market dynamics change in ways that current regulatory frameworks don't address.

5. Strategic Scenario Planning

Given the combined force of this week's developments, executives should prepare for three plausible scenarios over the next six to twelve months:

**Scenario One: The Fragmentation Scenario.** The Pentagon labels Anthropic a supply-chain risk.

DeepSeek V4 launches with capabilities clearly derived from distilled American models. Export controls tighten on both API access and chips. The result is a rapid bifurcation into Western and Chinese AI ecosystems with limited interoperability.

Enterprise buyers face a forced choice about which ecosystem they build on, with geopolitical alignment becoming a procurement criterion. Multi-model orchestration platforms like Perplexity become essential hedge infrastructure. AI security spending doubles as both ecosystems treat the other as an adversary.

**Probability: Moderate-high.** The pieces are already in place. The question is speed, not direction.

**Preparation moves:** Map your AI supply chain dependencies by geography. Identify which models, APIs, and infrastructure components would be affected by tightened export controls or access restrictions. Begin pilot deployments with at least two providers in different geopolitical risk categories.

Establish a 90-day provider migration capability as a strategic priority, not a theoretical exercise.

**Scenario Two: The Orchestration Inversion.** Perplexity Computer's model-agnostic approach proves commercially successful.

Enterprise buyers adopt multi-model orchestration as a standard architecture. Frontier labs — Anthropic, OpenAI, Google — find themselves competing on price and performance benchmarks as interchangeable components rather than controlling the full user relationship. The value capture in AI shifts from model building to workflow orchestration, similar to how value in cloud computing shifted from hardware to platform services.

**Probability: Moderate,** emerging over twelve to eighteen months.

**Preparation moves:** Evaluate your AI integrations for portability today. If switching from Claude to GPT-5 or Gemini would require more than API endpoint changes, you have architecture work to do.

Standardize on abstraction layers that let you swap underlying models without rebuilding workflows. Begin testing multi-model approaches on at least one high-value use case this quarter to build institutional knowledge before the market forces the transition.

**Scenario Three: The Security Reckoning.** An AI-augmented cyberattack hits critical infrastructure (healthcare, energy, financial systems) at a scale that makes the Fortinet breach look like a proof of concept.
