Three Infrastructure Sovereignty Strategies Define AI's Next Decade

Episode Summary

STRATEGIC PATTERN ANALYSIS Pattern One: The Infrastructure Sovereignty Race The most strategically significant development this week isn't any single product launch-it's the emergence of three di...

Full Transcript

STRATEGIC PATTERN ANALYSIS

Pattern One: The Infrastructure Sovereignty Race

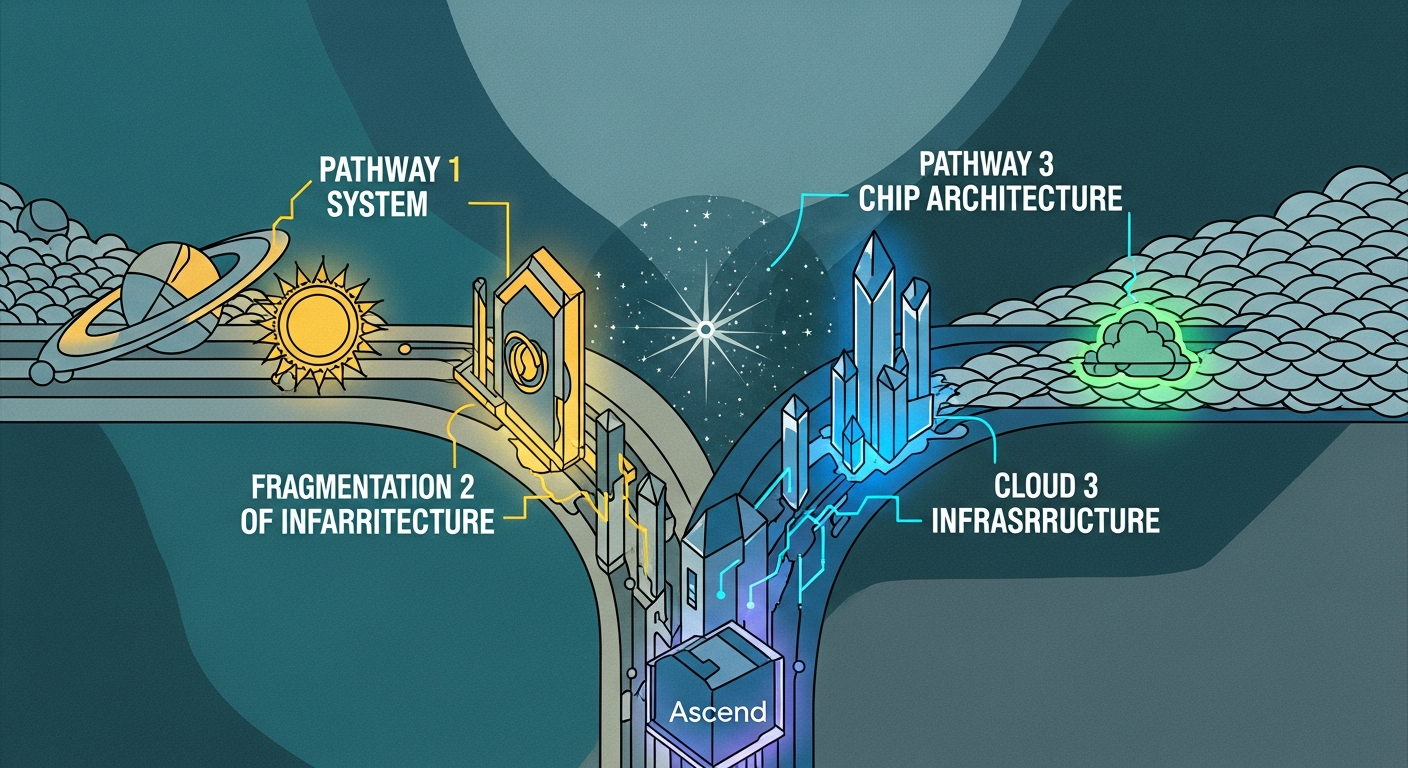

The most strategically significant development this week isn't any single product launch—it's the emergence of three distinct infrastructure sovereignty strategies that will define AI competition for the next decade. Elon Musk's vision of orbital AI training represents the most radical approach: escaping terrestrial constraints entirely. But the strategic logic is sound.

When the United States consumes half a terawatt of power and scaling AI requires doubling that, you're not solving an engineering problem anymore—you're solving a physics problem. Musk's merger of SpaceX with xAI wasn't corporate synergy theater. It was recognition that whoever controls energy infrastructure controls the AI future.

China's response through GLM-5 reveals a different sovereignty strategy. Z.ai built a 754 billion parameter model that runs on Huawei Ascend chips, achieving compute independence at the frontier level.

This is China declaring they've escaped the NVIDIA chokepoint. The model costs one dollar per million tokens—roughly ninety percent cheaper than Western alternatives. They're not competing on capability alone; they're competing on strategic independence.

Google's Deep Think release represents the third approach: leveraging existing scale advantages. Google doesn't need to build rockets or escape export controls. They have effectively unlimited compute, integration with search for hallucination reduction, and cash flow that doesn't require fundraising.

Their infrastructure sovereignty comes from sheer existing scale. What makes this pattern strategically significant is that all three approaches are succeeding simultaneously. The AI infrastructure race isn't converging toward a single model—it's fragmenting into parallel systems with different dependencies, cost structures, and strategic vulnerabilities.
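To make the cost-structure point concrete, here is a rough back-of-the-envelope sketch. The one dollar per million tokens figure is the GLM-5 price quoted above. The ten dollar figure is simply what "ninety percent cheaper" implies, and the monthly token volume is a hypothetical workload chosen for illustration, not a measured number.

```python
def monthly_cost(tokens_per_month: int, usd_per_million_tokens: float) -> float:
    """Return the monthly inference bill in US dollars."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


# Price quoted in the episode for GLM-5.
GLM5_USD_PER_MILLION = 1.0
# Assumption: the price implied by "roughly ninety percent cheaper".
WESTERN_USD_PER_MILLION = 10.0
# Hypothetical workload: 100 billion tokens per month.
TOKENS_PER_MONTH = 100_000_000_000

western_bill = monthly_cost(TOKENS_PER_MONTH, WESTERN_USD_PER_MILLION)
glm5_bill = monthly_cost(TOKENS_PER_MONTH, GLM5_USD_PER_MILLION)
savings_fraction = 1 - glm5_bill / western_bill

print(f"Western alternative: ${western_bill:,.0f} per month")
print(f"GLM-5:               ${glm5_bill:,.0f} per month")
print(f"Savings:             {savings_fraction:.0%}")
```

Because the bill is linear in token volume, the percentage gap stays fixed while the absolute dollar gap grows with every additional token consumed.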

Pattern Two: The Agentic Infrastructure Inflection

OpenClaw's meteoric rise, from zero to one hundred sixty thousand GitHub stars in two weeks, signals we've crossed from experimental to production-grade autonomous infrastructure. But the strategic significance lies in what's happening around it.

MyClaw.ai overwhelmed its servers with ten thousand paid waitlist signups. The demand came from non-technical operators and creators, not developers. This is the Cambrian explosion moment for autonomous agents: the point when the technology moves from specialists to mainstream operators.

Simultaneously, Anthropic shipped Agent Teams in Claude Code, allowing multiple AI sessions to coordinate with shared task lists and inter-agent messaging. OpenAI countered with Codex that users describe leaving running for eight-plus hours, returning to find fully functional software. Google released Aletheia, a math research agent that conducts autonomous scientific research.

The convergence point is clear: every major lab is racing to build agents that operate without continuous human supervision. The strategic question has shifted from "can AI do this task?" to "can AI do this task reliably for eight hours while I sleep?"

This connects directly to the infrastructure sovereignty race because autonomous agents consume vastly more compute than interactive chat. If your agent runs continuously for weeks, your inference costs scale proportionally. Whoever solves the energy and chip constraints gains a massive advantage in the agentic era.

Pattern Three: The Organizational Instability Tax

The xAI leadership exodus—five of twelve founders departed within a year—reveals a pattern that's being systematically underpriced in AI valuations. Jimmy Ba and Tony Wu left immediately after the SpaceX merger. The cultural collision between research lab autonomy and aerospace execution discipline isn't a soft problem—it's existential for technical organizations where the talent is the entire value proposition.

But xAI isn't alone. OpenAI deployed a custom model to scan internal Slack messages hunting for leakers. Researcher Zoë Hitzig resigned in protest over the company's decision to test ads.

The internal cultural tensions at frontier labs are intensifying precisely when stability matters most. This pattern connects to the broader competitive landscape because organizational instability directly impacts execution. Musk's vision of lunar factories building AI satellites requires sustained technical execution over years.

When your founding team is walking out, that execution capability degrades. Meanwhile, Anthropic just closed a thirty billion dollar round at a three hundred eighty billion dollar valuation, reportedly with greater organizational stability. The strategic implication: the AI race will be won not by the company with the best ideas, but by the company that can retain the talent to execute on those ideas over multi-year timeframes.

Pattern Four: The Benchmark Collapse and New Evaluation Paradigm

Google's Deep Think didn't just win benchmarks; it exposed that our current evaluation frameworks are becoming meaningless at the frontier. Deep Think hit 84.6 percent on ARC-AGI-2 compared to Opus 4.6 at 68.8 percent and GPT-5.2 at 52.9 percent.

That's not an incremental improvement; it's a capability discontinuity. The model achieved gold medal performance on Physics and Chemistry Olympiads simultaneously while scoring nearly 1,000 Elo points above Opus on Codeforces.

But here's the strategic signal: traditional benchmarks are saturating while real-world performance gaps remain enormous. Both Anthropic and OpenAI report their top models achieve near-perfect scores on standard coding tests, yet users still experience massive quality differences in production deployments. The industry is entering what insiders call the "post-benchmark era."

The differentiation increasingly comes from sustained agentic workflows, context management over extended sessions, and judgment under ambiguity, none of which standard benchmarks measure well. This connects to the agentic infrastructure pattern because autonomous agents expose model limitations that interactive chat conceals. When an agent runs for eight hours, every weakness compounds.

The context rot phenomenon—where output quality degrades after consuming roughly half the context window—becomes critical. Users are developing new evaluation methods like planting "canary" facts to test context degradation.
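The canary approach mentioned above can be sketched in a few lines. This is a minimal illustration, not any particular team's tooling: the `ask` callable is a hypothetical wrapper around whatever model API you use, the codewords are invented, and `forgetful_model` is a toy stand-in that simply ignores the second half of its context so the sketch runs without a real model.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Canary:
    sentence: str  # planted fact inserted into the context
    question: str  # what we later ask the model
    answer: str    # the value we expect to get back


def plant_canaries(filler: List[str], canaries: List[Canary]) -> Tuple[str, List[float]]:
    """Spread canary sentences evenly through the filler paragraphs.

    Returns the combined context plus each canary's relative depth
    (0.0 = start of the context, 1.0 = end).
    """
    step = len(filler) / (len(canaries) + 1)
    positions = [round(step * (i + 1)) for i in range(len(canaries))]
    paragraphs = list(filler)
    # Insert from the back so one insert does not shift the next insert point.
    for pos, canary in sorted(zip(positions, canaries), key=lambda pair: pair[0], reverse=True):
        paragraphs.insert(pos, canary.sentence)
    depths = [pos / len(filler) for pos in positions]
    return "\n\n".join(paragraphs), depths


def recall_by_depth(
    ask: Callable[[str, str], str],
    context: str,
    canaries: List[Canary],
    depths: List[float],
) -> List[Tuple[float, bool]]:
    """Ask every canary question and record whether the planted answer came back."""
    results = []
    for canary, depth in zip(canaries, depths):
        reply = ask(context, canary.question)
        results.append((depth, canary.answer.lower() in reply.lower()))
    return results


def forgetful_model(context: str, question: str) -> str:
    """Toy stand-in for a model call: it only 'sees' the first half of the context."""
    return context[: len(context) // 2]


filler = [f"Filler paragraph {i}." for i in range(100)]
canaries = [
    Canary("The deploy codeword is ZEBRA-17.", "What is the deploy codeword?", "ZEBRA-17"),
    Canary("The backup codeword is OTTER-42.", "What is the backup codeword?", "OTTER-42"),
    Canary("The audit codeword is HERON-93.", "What is the audit codeword?", "HERON-93"),
]
context, depths = plant_canaries(filler, canaries)
results = recall_by_depth(forgetful_model, context, canaries, depths)
for depth, recalled in results:
    print(f"depth {depth:.2f}: recalled={recalled}")
```

With a real model behind `ask`, plotting recall against depth for progressively longer contexts gives a rough picture of where quality starts to fall off.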

CONVERGENCE ANALYSIS

Systems Thinking: The Emergent Architecture

When you analyze these four patterns as an integrated system, a new architecture for AI competition emerges. The infrastructure sovereignty race determines who can train and run frontier models at scale. The agentic infrastructure inflection determines what those models are used for.

The organizational instability tax determines who can actually execute. And the benchmark collapse determines how we'll know who's winning. These patterns reinforce each other in ways that amplify certain strategic positions while undermining others.

Consider the reinforcing loop between infrastructure sovereignty and agentic deployment. Autonomous agents consume dramatically more compute than interactive chat. A company running Claude for human-supervised coding uses tokens during work hours.

A company running OpenClaw agents continuously uses tokens twenty-four-seven. This ten-times or hundred-times increase in inference demand makes infrastructure advantages correspondingly more valuable. Musk's orbital training vision now makes more sense.

If agents consume vastly more compute, and you're energy-constrained on Earth, escaping to space isn't a moonshot fantasy—it's the logical endpoint of current trajectories. China's strategy becomes clearer too. By achieving compute independence with Huawei chips and offering prices ninety percent below Western alternatives, they're positioning to capture the agentic deployment wave.

When enterprises need to run agents continuously, cost differences compound dramatically. A company spending one million dollars monthly on OpenAI inference could spend one hundred thousand on GLM-5 for equivalent capability. The organizational instability pattern interacts perversely with these infrastructure demands.

Building orbital training infrastructure or achieving chip independence requires sustained execution over years. xAI losing five founders in twelve months directly undermines Musk's ability to execute on his vision. The cultural collision between SpaceX execution discipline and AI research autonomy may be generating exactly the instability that makes the orbital vision unreachable.

Meanwhile, Google's Deep Think release demonstrates what happens when you have infrastructure scale, organizational stability, and research capability aligned. They didn't need a hundred billion dollar fundraise. They didn't need to merge with an aerospace company.

They leveraged existing advantages across compute, search integration, and research talent.

Competitive Landscape Shifts

The combined force of these developments creates distinct strategic tiers that will define competition through the end of the decade.

**Tier One: Infrastructure Sovereigns** Google emerges as the most advantaged player when you analyze these patterns together. They have compute scale that doesn't require fundraising, organizational stability without the Musk chaos tax, demonstrated frontier capability with Deep Think, and integration advantages through search that reduce hallucinations. Their weakness is execution speed: they've historically moved slower than startups.

**Tier Two: Capital-Rich Contenders** Anthropic, at a three hundred eighty billion dollar valuation, and OpenAI, with forty billion in fresh capital, have the resources to compete but face infrastructure dependencies. Both rely on cloud providers and NVIDIA for compute. Both face organizational challenges: OpenAI's internal surveillance and ad introduction, and Anthropic's need to maintain safety credibility while scaling commercially.

**Tier Three: Sovereignty Builders** xAI and China's labs are attempting to build independent infrastructure but face different obstacles. xAI has the vision, but organizational instability may prevent execution. China has the chips and the models but faces potential isolation from Western markets and talent.

**Tier Four: Infrastructure Dependents** Everyone else (enterprise AI companies, vertical applications, tools built on API access) faces increasing strategic vulnerability. If GLM-5 offers equivalent capability at ninety percent lower cost, API-dependent companies must either migrate or accept permanently higher cost structures than Chinese competitors.

The strategic implication is stark: companies without infrastructure sovereignty are essentially at the mercy of the tier-one and tier-two players. Every business building on OpenAI APIs today is making an implicit bet that OpenAI will maintain capability leadership while keeping prices competitive with Chinese alternatives.

That bet looks increasingly risky.

Market Evolution: Emergent Opportunities and Threats

When viewed as interconnected developments rather than isolated news, several market opportunities and threats emerge that aren't obvious from the surface reporting.

**Opportunity: Agent Orchestration Infrastructure** The convergence of autonomous agents, benchmark collapse, and organizational instability creates massive opportunity in the orchestration layer. If models are commoditizing while agents multiply, the value shifts to platforms that manage agent fleets, route tasks to appropriate models, and maintain reliability across providers. Thomas Dohmke's Entire raising sixty million for tracking AI-generated code is an early example. The real opportunity is broader: infrastructure for deploying, monitoring, and governing autonomous agent swarms across multiple model providers.

**Opportunity: Model-Agnostic Enterprise AI** With GLM-5 offering ninety percent cost savings and Deep Think demonstrating capability discontinuities, enterprises need infrastructure that abstracts away model selection. Companies that build production systems locked to a single provider face either cost disadvantage or capability disadvantage depending on which competitor pulls ahead. This creates opportunity for middleware and abstraction layers that let enterprises route workloads based on cost, capability, and reliability requirements without rebuilding their applications.
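A minimal sketch of what such a routing layer might look like follows. Everything in it is illustrative: the model names, prices, and scores are placeholders rather than measurements of any real provider, and the "cheapest option that clears both thresholds" rule is just one simple policy among many.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    usd_per_million_tokens: float
    capability: float   # 0..1, from your own evaluations (placeholder values below)
    reliability: float  # 0..1, e.g. observed task success rate (placeholder values below)


def route(
    options: List[ModelOption],
    min_capability: float,
    min_reliability: float,
) -> Optional[ModelOption]:
    """Pick the cheapest option that meets both thresholds, or None if none qualifies."""
    eligible = [
        option
        for option in options
        if option.capability >= min_capability and option.reliability >= min_reliability
    ]
    return min(eligible, key=lambda option: option.usd_per_million_tokens, default=None)


# Hypothetical catalog; swap in your own providers and measured scores.
catalog = [
    ModelOption("frontier-model", 10.0, 0.95, 0.99),
    ModelOption("budget-model", 1.0, 0.80, 0.97),
    ModelOption("local-model", 0.2, 0.55, 0.90),
]

routine_choice = route(catalog, min_capability=0.5, min_reliability=0.9)
hard_choice = route(catalog, min_capability=0.9, min_reliability=0.95)
impossible_choice = route(catalog, min_capability=0.99, min_reliability=0.99)

print(routine_choice.name if routine_choice else None)
print(hard_choice.name if hard_choice else None)
print(impossible_choice)
```

Keeping the thresholds per workload rather than per application is what lets the same codebase move a task to a different provider when prices or capabilities shift.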

**Threat: SaaS Value Destruction** The convergence of autonomous agents and cost reduction threatens traditional SaaS economics more severely than either development alone. When an OpenClaw agent can monitor systems, execute workflows, and respond to events without human supervision, and when that agent can run on GLM-5 at one dollar per million tokens, what's the value proposition of per-seat enterprise software? The forty-percent revenue decline Salesforce would need to absorb from agent-based automation is probably conservative. Entire categories of business software are being made redundant not by better software, but by software that doesn't require humans to operate it.

**Threat: Talent Concentration** The organizational instability pattern combined with infrastructure sovereignty creates a talent concentration threat. If xAI continues hemorrhaging founders while Google demonstrates capability leadership with organizational stability, the talent flow becomes self-reinforcing. Researchers want to work where they can execute ambitious projects without chaos.

This threatens the diversity of AI development approaches. If all the top talent concentrates at two or three well-functioning organizations, the entire field becomes dependent on those organizations' decisions about what to build and how to deploy it.

Technology Convergence: Unexpected Intersections

Several unexpected technology convergences emerged this week that deserve strategic attention.

**Space Infrastructure and AI Economics** The most surprising convergence is between space technology and AI economics. A year ago, suggesting that rocket companies would become AI infrastructure providers would have seemed absurd. Now the logic is unavoidable: if AI scaling requires energy that Earth can't provide, space-based solar becomes the only path forward.

This creates unexpected strategic value for aerospace companies. Blue Origin's lag behind SpaceX suddenly matters not just for NASA contracts but for AI infrastructure positioning. Companies like Planet Labs or Rocket Lab that have operational satellite capabilities may find themselves courted for AI infrastructure partnerships.

**Legal AI and Reliability Engineering** The UK High Court ruling that AI can't reliably conduct legal research, based on lawyers submitting eighteen fake ChatGPT citations, converged unexpectedly with Anthropic's legal plugin for Claude improving sixty percent on complex legal benchmarks. Legal software stocks dropped 8.5 percent.

This reveals a pattern: reliability requirements in professional domains are creating opportunities for specialized AI applications that general-purpose models can't serve. The convergence of domain expertise, reliability engineering, and AI capability creates high-value niches where premium pricing survives commoditization.

**Autonomous Agents and Persistent Simulation** ClawCity hosting thirty-seven thousand autonomous agents managing health, cash, and reputation in a persistent simulation converges with enterprise agentic deployment in an unexpected way. The agents in ClawCity are self-organizing into economies and gangs, emergent behaviors that weren't explicitly programmed. This is a preview of enterprise agent deployment challenges.

When you run thousands of autonomous agents in production, emergent behaviors become inevitable. The simulation environment is teaching us about agent dynamics before we deploy at enterprise scale.

Strategic Scenario Planning

Given these combined developments, executives should prepare for three plausible scenarios.

**Scenario One: Infrastructure Fragmentation** In this scenario, the three infrastructure sovereignty approaches all succeed partially, creating fragmented AI ecosystems with limited interoperability. Western enterprises operate primarily on Google and Microsoft infrastructure. Chinese enterprises operate on domestic alternatives. Musk's orbital infrastructure serves specialized high-compute workloads but doesn't achieve general adoption.

The strategic implication: enterprises need multi-cloud, multi-model strategies with abstraction layers that allow workload routing across ecosystems. Vendor lock-in becomes existentially dangerous. Companies that build deep dependencies on single providers face either cost penalties or capability gaps depending on how the fragmentation evolves.

**Scenario Two: Agentic Acceleration** In this scenario, the agentic infrastructure inflection accelerates faster than infrastructure can scale, creating severe compute constraints that reshape the industry. Agent deployment explodes across enterprises, but energy and chip constraints create compute shortages. Prices spike for inference. Companies with infrastructure advantages, such as Google, Microsoft, and Amazon, capture disproportionate value by rationing access.

The strategic implication: enterprises should secure inference capacity commitments now, before the shortage materializes. Consider vertical integration into infrastructure: on-premise deployment, dedicated cloud capacity, or even energy generation. The companies that survive the shortage will be those with guaranteed compute access.

**Scenario Three: Capability Discontinuity** In this scenario, one lab achieves a breakthrough that creates a clear capability lead, collapsing the current competitive balance. Google's Deep Think performance suggests this is plausible. An 84.6 percent score on ARC-AGI-2 compared to 52.9 percent for GPT-5.2 isn't incremental; it's a different capability class. If one lab achieves similar discontinuities in agentic reliability or long-horizon task completion, the entire competitive landscape reshapes around that leader.

The strategic implication: maintain optionality in AI provider relationships. Don't commit to multi-year contracts with single providers. Build architectures that can rapidly migrate to whichever provider achieves capability leadership. The cost of switching will be lower than the cost of being locked into a capability laggard.

For executives navigating these scenarios, the common thread is reducing dependencies and maintaining strategic flexibility. The AI landscape is evolving too rapidly and unpredictably to make long-term commitments to specific providers, architectures, or approaches. The companies that will thrive are those that can adapt quickly as these scenarios unfold, and the ones that will struggle are those that optimized for a single predicted future that didn't materialize.
