Musk's Bombshell Testimony Exposes OpenAI's Nonprofit Mission Contradiction

Full Transcript

TOP NEWS HEADLINES

The Musk v. Altman trial entered its second week with a bombshell moment: Musk's lawyer read Greg Brockman's personal journals aloud to the jury, including a 2017 entry where Brockman described OpenAI's public commitment to its nonprofit mission as, quote, "a lie." Brockman's defense: these were stream-of-consciousness self-doubt entries, not a master plan.

Following yesterday's coverage of the Anthropic developer conference, new details emerged: Anthropic is quietly developing "Orbit," a proactive briefing and insights system inside Claude and Claude Code that pulls personalized updates from connected work tools.

Following yesterday's coverage of GPT-5.5, new details emerged: analysis shows the model launched with a two-times price increase over GPT-5.4, though because it generates fewer completion tokens, the actual cost increase lands somewhere between 49% and 92%.
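To make that arithmetic concrete, here is a minimal sketch of how a doubled price and a 49% to 92% net cost increase fit together. It assumes, as a simplification not stated in the episode, that the whole bill scales with completion tokens; the function name is illustrative only.

```python
# Net cost multiplier = price multiplier x completion-token multiplier.
# Assumption: the bill is dominated by completion tokens (a simplification).
PRICE_MULTIPLIER = 2.0  # from the episode: a two-times price increase


def implied_token_ratio(cost_increase_pct: float,
                        price_multiplier: float = PRICE_MULTIPLIER) -> float:
    """Return the new/old completion-token ratio implied by a net cost increase."""
    cost_multiplier = 1.0 + cost_increase_pct / 100.0
    return cost_multiplier / price_multiplier


if __name__ == "__main__":
    for pct in (49.0, 92.0):
        ratio = implied_token_ratio(pct)
        fewer = (1.0 - ratio) * 100.0
        print(f"{pct:.0f}% cost increase implies {ratio:.3f}x tokens ({fewer:.1f}% fewer)")
```

Under that assumption, the quoted range corresponds to the newer model using roughly 4% to 25% fewer completion tokens per task, which is why the bill rises by less than the headline price.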

Both Anthropic and OpenAI announced rival private equity joint ventures on the same day — Anthropic's valued at $1.5 billion with Blackstone, Goldman Sachs, and Hellman & Friedman, while OpenAI's "Deployment Company" is targeting a $10 billion valuation with TPG, Brookfield, and Bain.

And Y Combinator quietly holds roughly 0.6% of OpenAI, a stake now worth over $5 billion, which adds an interesting financial lens to YC co-founder Paul Graham's very public defense of Sam Altman during the New Yorker trustworthiness story last month.

DEEP DIVE ANALYSIS

The New Frontiers of AI Infrastructure: Space and Sea

AI has a power problem.

Not a metaphorical one — a literal, physical, infrastructure problem.

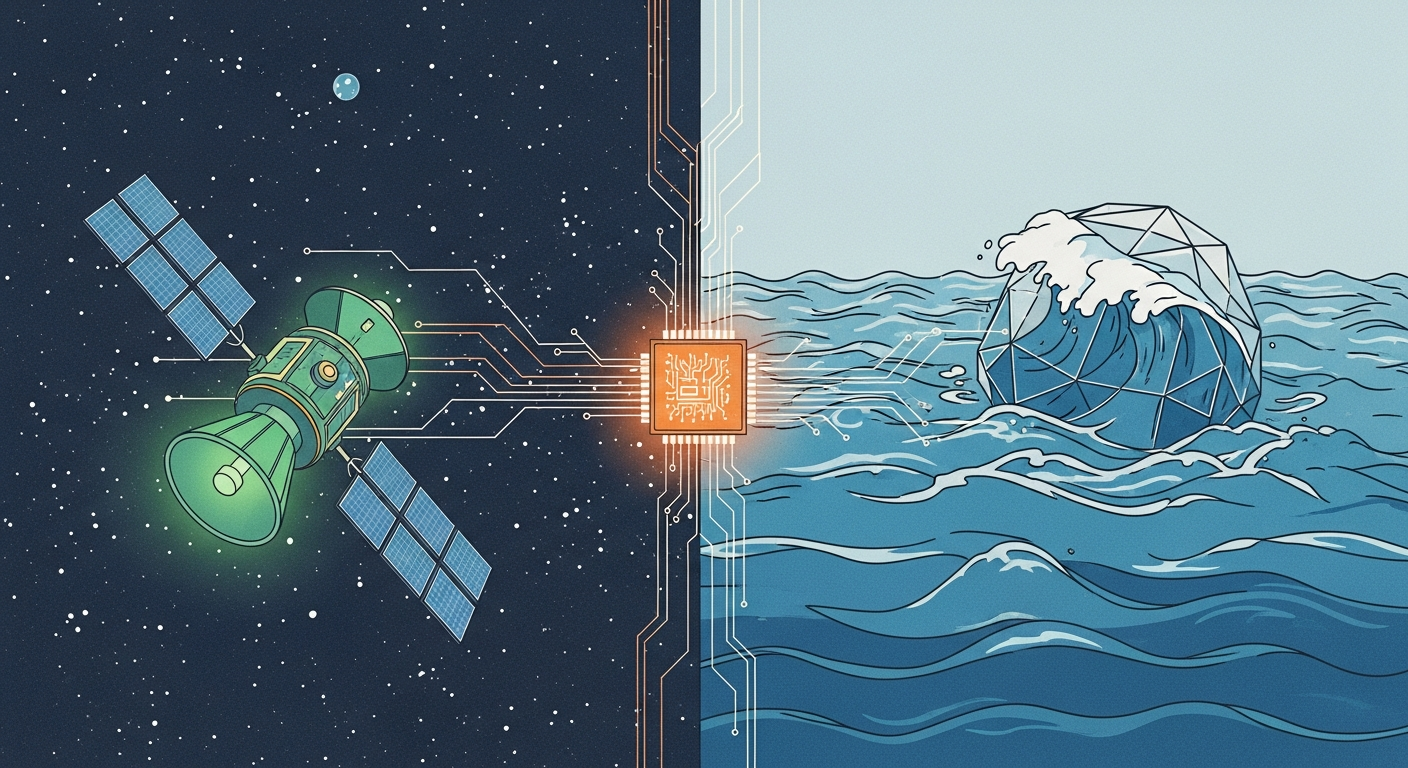

And this week, two very different bets emerged on where the next generation of compute actually gets built: one pointing skyward, one pointing offshore.

Let's break down what's actually happening, why it matters, and what executives need to do about it.

**Technical Deep Dive**

SpaceX broke ground on a new solar cell fabrication facility in Bastrop, Texas.

This isn't a standard solar farm — SpaceX is vertically integrating the entire manufacturing process to produce aerospace-grade solar arrays purpose-built for orbital deployment.

The goal is an orbital cloud network capable of handling AI workloads from space.

By controlling fabrication in-house, SpaceX insulates itself from global supply chain fragility while pushing the physical limits of solar cell efficiency and mass.

Meanwhile, Oregon-based startup Panthalassa just closed a $140 million Series B led by Peter Thiel, valuing the company at nearly $1 billion.

Their technology: 85-meter autonomous steel nodes that bob in open ocean, converting wave motion into electricity for onboard AI chips — all cooled naturally by seawater.

The nodes navigate to remote waters using only their hull geometry, no engines required, and beam results back via SpaceX's Starlink.

Two radically different approaches, one extraterrestrial and one oceanic, but both are attacking the same constraint: we are running out of places to put data centers.

**Financial Analysis**

The AI compute crunch is increasingly a real estate and energy story, not just a silicon story.

Communities are pushing back hard against data center construction — the noise, the water consumption, the grid strain.

That social friction is creating genuine infrastructure bottlenecks that no amount of chip investment can solve alone.

Panthalassa's $140 million raise at a near-$1 billion valuation tells you institutional capital is taking this seriously.

Peter Thiel told the Financial Times that "extraterrestrial solutions to compute are no longer science fiction" — and when Thiel speaks in that register, money follows.

This is the same pattern we saw with early cloud infrastructure: a niche that looked eccentric until it became the backbone of everything.

SpaceX's solar fab investment is harder to price because it's internal, but the strategic logic is identical.

Orbital compute means no permitting battles, no community opposition, no grid congestion.

The capital expenditure is massive upfront, but the operating environment is unconstrained.

For a company already manufacturing rockets at scale, solar cell fabrication is a natural vertical extension — and it positions SpaceX to offer infrastructure-as-a-service to every AI lab that can't build its own orbital network.

These are long-horizon bets, but the financial pressure behind them is immediate.

**Market Disruption**

The traditional hyperscaler model, with Amazon, Google, and Microsoft building massive land-based campuses, is hitting a ceiling.

That creates a genuine opening for alternative infrastructure models.

If Panthalassa delivers commercial nodes in 2027, it doesn't need to replace hyperscalers — it needs to serve the overflow.

AI inference demand is growing so fast that any additional compute, at any location, finds buyers.

If Panthalassa nodes communicate via Starlink, that makes SpaceX a critical supplier to ocean compute — and if SpaceX is simultaneously building its own orbital compute network, those two businesses start to look like a vertically integrated AI infrastructure stack that no existing cloud provider can replicate.

For the neocloud players (the CoreWeave tier), this is both a threat and an opportunity.

They're already differentiating on infrastructure access.

The first neocloud to lock in a supply agreement with Panthalassa or position itself as an ocean-compute reseller has a story Wall Street will love.

**Cultural and Social Impact**

There's something worth pausing on here, which is why communities are pushing back against data centers in the first place.

Data centers consume enormous amounts of water for cooling, strain local power grids, and generate noise and heat.

In some regions, they've driven up electricity prices for residential customers.

Ocean and orbital compute sidestep this friction almost entirely.

Panthalassa's wave-to-electricity conversion is a closed system — the ocean is both the energy source and the cooling medium.

That changes the political economy of AI infrastructure in a meaningful way.

Right now, every new data center announcement generates a news cycle of community resistance.

If compute moves offshore or into orbit, that friction largely disappears — and the speed at which AI infrastructure can scale accelerates dramatically.

That said, floating nodes in international waters raise real questions about regulatory jurisdiction, labor standards, and environmental impact assessment.

These aren't hypotheticals. They're the policy conversations that will happen in 2027 when Panthalassa's first nodes deploy.

**Executive Action Plan**

Three things you should be doing right now.

First, if your AI workloads run on a single hyperscaler, you are exposed to the same supply constraints driving this entire story.

Start mapping alternative compute providers, including neoclouds and regional operators, and keep an eye on the Panthalassa commercial timeline for 2027.

Diversification here isn't overcaution; it's basic supply chain hygiene.

Second, get ahead of the energy conversation internally.

Your board and your CFO will be asking about AI's energy footprint within 18 months — not because they're environmentalists, but because energy costs are becoming a meaningful variable in AI operating budgets.

Understand what your inference workloads actually cost in kilowatt-hours, not just dollars-per-token.
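One way to start that conversation is a back-of-the-envelope conversion from measured power draw and throughput to energy per token. The sketch below is illustrative only: the class and function names are hypothetical, and every number in the example run is a placeholder to be replaced with your own measurements and electricity rate.

```python
from dataclasses import dataclass

JOULES_PER_KWH = 3_600_000  # 1 kWh = 3.6 million joules


@dataclass
class InferenceProfile:
    """Hypothetical measurements for one serving deployment."""
    avg_power_watts: float    # average electrical draw of the serving hardware
    tokens_per_second: float  # sustained output throughput
    pue: float = 1.0          # power usage effectiveness (facility overhead multiplier)


def kwh_per_million_tokens(profile: InferenceProfile) -> float:
    """Energy needed to generate one million tokens, in kilowatt-hours."""
    seconds = 1_000_000 / profile.tokens_per_second
    joules = profile.avg_power_watts * profile.pue * seconds
    return joules / JOULES_PER_KWH


def energy_cost_per_million_tokens(profile: InferenceProfile, usd_per_kwh: float) -> float:
    """Electricity cost for one million tokens at a given rate."""
    return kwh_per_million_tokens(profile) * usd_per_kwh


if __name__ == "__main__":
    # Placeholder numbers, not measurements from any real system.
    profile = InferenceProfile(avg_power_watts=1000.0, tokens_per_second=1000.0, pue=1.2)
    kwh = kwh_per_million_tokens(profile)
    usd = energy_cost_per_million_tokens(profile, usd_per_kwh=0.10)
    print(f"{kwh:.3f} kWh and ${usd:.4f} of electricity per million tokens")
```

Tracking this figure next to dollars-per-token shows how much of the bill is energy and how that share moves as electricity prices or hardware efficiency change.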

Third, watch the SpaceX orbital compute announcement cycle closely.

When SpaceX moves from solar fabrication to announcing orbital network capacity and pricing, that will be a signal moment — similar to when AWS announced EC2 pricing in 2006.

The companies that had a cloud strategy ready moved faster than the ones scrambling to develop one after the fact.

Don't be caught scrambling when orbital compute becomes a real procurement category.

The infrastructure layer of AI is being reinvented in real time.

The winners of the next decade aren't just the ones with the best models — they're the ones with access to compute when everyone else is waiting in line.
