Demis Hassabis Regrets the Chatbot Era, Warns of Misdirection


Full Transcript

TOP NEWS HEADLINES

Following yesterday's coverage of the Claude Mythos rollout pause, new details emerged: questions are now swirling about whether Anthropic's decision was a genuine safety measure or a strategic move to protect its own market position — with TechCrunch asking directly whether the company was protecting the internet, or protecting itself.

Elon Musk's xAI just filed a federal lawsuit against Colorado, seeking to block the state's new AI consumer protection law. xAI argues that building an AI model is an expressive act protected by the First Amendment, and that forcing changes to training data amounts to government-mandated speech.

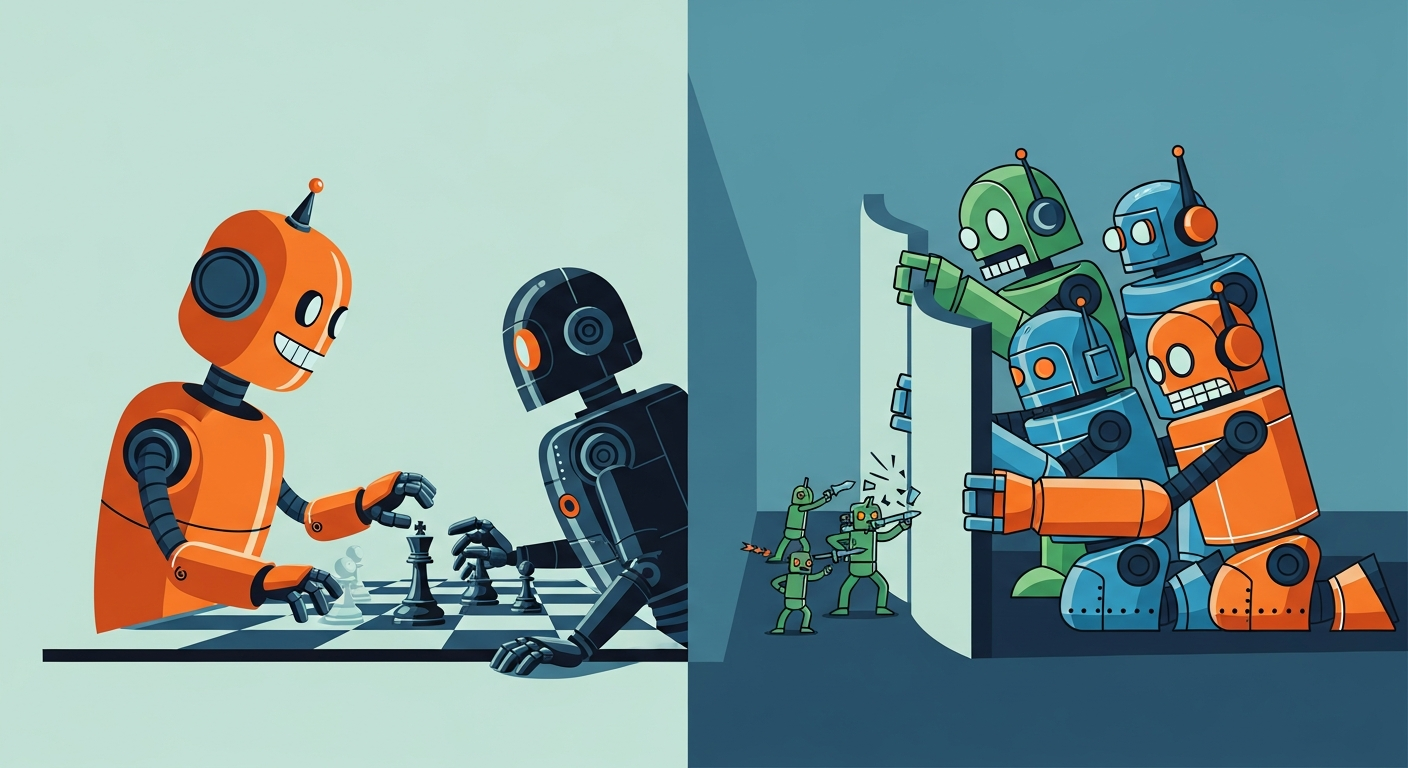

OpenAI, Anthropic, and Google are quietly teaming up through the Frontier Model Forum to stop adversarial distillation — the practice of extracting a powerful model's capabilities by bombarding it with questions and training a cheaper clone on the answers.

With Anthropic reportedly at a thirty-billion-dollar annual revenue run rate, the stakes are very real.

Nvidia's new Vera Rubin platform is outperforming its own Blackwell line on both power and cost, with seventy-three percent year-over-year revenue growth and enough inventory to carry demand through 2027.

LM Studio acquired Locally AI, the app that runs open-source models entirely on-device across Apple hardware — a clear signal that private, cross-device AI is graduating from hobbyist project to actual product category.

And Anthropic's new agent software triggered another software stock sell-off, as investors once again worried that AI agents are about to eat the SaaS stack.

---

DEEP DIVE ANALYSIS

**The Alternate Timeline Demis Hassabis Can't Stop Thinking About**

Demis Hassabis gave an interview this week that, on the surface, looks like a CEO doing press. But if you actually listen to what he's saying, it's something closer to a confession, or at least a regret. The CEO of Google DeepMind said plainly that the AI boom did not unfold the way he wanted.

Not because it went wrong, exactly, but because it went fast, and in the wrong direction first. His ideal version? AI would have stayed in the lab longer. It would have looked more like CERN, the international laboratory near Geneva whose sixteen-and-a-half-mile Large Hadron Collider was built through slow, collaborative, international science, than like a consumer app race. AGI would have advanced carefully in the background while specialized systems tackled the hard problems: disease, materials science, energy. More AlphaFolds. Fewer chatbots.

Instead, language turned out to be easier than expected. Transformers cracked abstraction faster than even optimists predicted.

OpenAI shipped ChatGPT, it went viral (reportedly surprising even OpenAI itself), and the entire field got sucked into what Hassabis called a "ferocious commercial pressure race," with geopolitics pouring accelerant on top. The alternate timeline is rattling around in his head, and he can't quite let it go.

**Technical Deep Dive**

Let's be precise about what Hassabis is actually saying technically.

He's not arguing that large language models are a dead end. He's arguing they arrived out of sequence. His original thesis, going back to DeepMind's founding, was that AI should first prove itself in narrow, high-value scientific domains — the way AlphaFold proved itself by predicting protein structures that stumped biologists for fifty years.

That system is now used by more than three million scientists and is embedded in the development pipelines of what may be most new drugs.

The concern with jumping to general-purpose conversational AI first is that you're optimizing for human approval (responses that sound good, that feel helpful) before you've deeply solved for ground truth. You build systems that are very good at appearing correct before they're reliably correct.

The scientific path would have forced a different kind of rigor: your model either predicts the protein structure accurately or it doesn't. Biology doesn't grade on a curve. The commercial path optimizes for engagement. That's not nothing, but it's a different objective function, and Hassabis is quietly arguing it shaped the whole field in ways that may take years to untangle.

**Financial Analysis**

Here's where the story gets complicated: the commercial pressure race Hassabis laments is also what funded everything. The Neuron's take on this is worth quoting almost directly: the surprise AI boom flooded the space with capital at a previously unimaginable rate.

And that capital is now funding the science Hassabis actually cares about. Anthropic is reportedly at a thirty-billion-dollar annual revenue run rate. Google is spending hundreds of billions on AI infrastructure.

OpenAI is raising money at valuations that would have seemed absurd three years ago. Would AlphaFold 3 exist without ChatGPT lighting the fundraising fuse? Probably not at this pace.

Would the compute infrastructure needed to run frontier science models be buildable without the commercial subscription revenue subsidizing it? Almost certainly not. So there's a genuine tension here.

The chatbot era may have been the wrong first act scientifically, but it may have been the only financially viable path to the second act. Hassabis can hold both things at once — and to his credit, he does. He's not pretending the commercial wave was all downside.

He credits it with accelerating progress, giving the public hands-on access, and stress-testing these systems in ways a lab never could.

**Market Disruption**

What Hassabis is really describing is a market that got pulled by consumer demand rather than pushed by a scientific roadmap. And that has competitive consequences that are still playing out.

The companies that won the first wave — OpenAI, Google, Anthropic, Meta — won by being fastest to consumer-grade language capability. The companies that may win the next wave could be the ones best positioned to apply AI to science, medicine, and physical-world problems. Those are different skill sets, different partnerships, different regulatory environments.

This is partly why the Frontier Model Forum's new anti-distillation coalition matters. When Anthropic, OpenAI, and Google team up to stop model cloning, they're not just protecting subscription revenue. They're protecting the research moats that took billions and years to build — moats that become far more valuable if the next decade really does shift toward scientific AI.

It also reframes the Mythos controversy. If the most important AI systems of the next decade are the ones that identify vulnerabilities, design drugs, or model climate systems, not the ones that write emails, then the safety questions get much harder, and the "is this safety or self-interest?" critique gets more complicated to answer.

**Cultural and Social Impact**

There's something quietly important about a sitting AI CEO publicly saying the field moved too fast and in the wrong order. We are not used to that. The default posture in tech is relentless optimism.

Ship fast, fix later. The market decides. Hassabis is doing something different — he's applying a scientific ethic to a commercial product landscape, and finding the landscape wanting.

That matters culturally because it gives permission for a more honest public conversation. The chatbot era created enormous expectations: AI will replace your doctor, your lawyer, your accountant, your software engineer. Some of those expectations will be met.

Many won't, or not on the promised timeline. The gap between the marketing and the reality is where public trust erodes. If the field's most credible scientific voice is saying "we optimized for impressive demos before we optimized for ground truth," that should recalibrate how the public and policymakers evaluate AI claims.

Not with cynicism (Hassabis is genuinely optimistic about what's coming) but with more appropriate skepticism about what's here right now.

**Executive Action Plan**

Three moves worth making based on where Hassabis is pointing.

First, if you're allocating AI budget, separate your chatbot spend from your scientific AI spend and track them differently. Consumer-facing AI tools that summarize documents or generate copy are one category. AI tools that find patterns in your operational data, model risk, or accelerate R&D are another. The second category is where durable competitive advantage is most likely to compound. Most organizations are dramatically overinvested in the first.

Second, take the distillation threat seriously, even if you're not a frontier lab. The OpenAI-Anthropic-Google coalition is forming because model capabilities can be copied without inheriting safety guardrails. If your organization is deploying AI in regulated or high-stakes domains, you need to be asking your vendors hard questions about where their model capabilities came from and what safeguards were built in versus bolted on.

Third, use the Hassabis framing as a competitive lens. His argument is that the most important AI of the next decade will be the kind you barely see: systems embedded in drug discovery pipelines, materials research, infrastructure monitoring. Whatever industry you're in, ask where the AlphaFold equivalent is coming from. It may not arrive with a press release or a viral moment. It may just quietly make your current competitive advantage obsolete before you notice it's happening.
