OpenAI Blindsides Disney as ARC-AGI-3 Exposes AI Reasoning Gap

Episode Summary

TOP NEWS HEADLINES Following yesterday's coverage of OpenAI shutting down Sora, new details emerged today that Disney was blindsided by the decision - finding out just thirty minutes after wrappin...

Full Transcript

TOP NEWS HEADLINES

Following yesterday's coverage of OpenAI shutting down Sora, new details emerged today that Disney was blindsided by the decision — finding out just thirty minutes after wrapping a joint meeting with OpenAI.

That's a brutal way to learn your creative partnership just evaporated.

Google's TurboQuant algorithm is turning heads — and rattling memory stocks.

The compression technique cuts AI model memory usage sixfold and speeds up inference eightfold on Nvidia H100 chips, with zero accuracy loss.

Memory stocks dropped three to five percent on the news.

Sanders and AOC dropped a legislative grenade this week, proposing a full moratorium on new data center construction until federal AI regulation passes.

Senator Mark Warner, a Democrat, called it "idiocy" at the Axios AI Summit, so don't expect unified Democratic support, let alone bipartisan backing.

The co-founders of AI agent firm Manus have been restricted from leaving China while authorities review Meta's two-and-a-half billion dollar acquisition of the company.

It's a rare move that signals Beijing is watching cross-border AI deals very carefully.

Joanna, our Synthetic Intelligence, is also flagging some significant architectural moves happening beneath the surface.

Inception Labs dropped Mercury 2 this week — a diffusion-based model hitting over a thousand tokens per second.

It uses a coarse-to-fine refinement process that can self-correct its reasoning mid-generation, which is a structural departure from how every major LLM works today.

Joanna tracks this kind of real-time AI signal on X at @dailyaibyai, and she's calling this one a potential inflection point.

And OpenAI just secured an additional ten billion dollars, pushing its total funding round past a hundred and twenty billion.

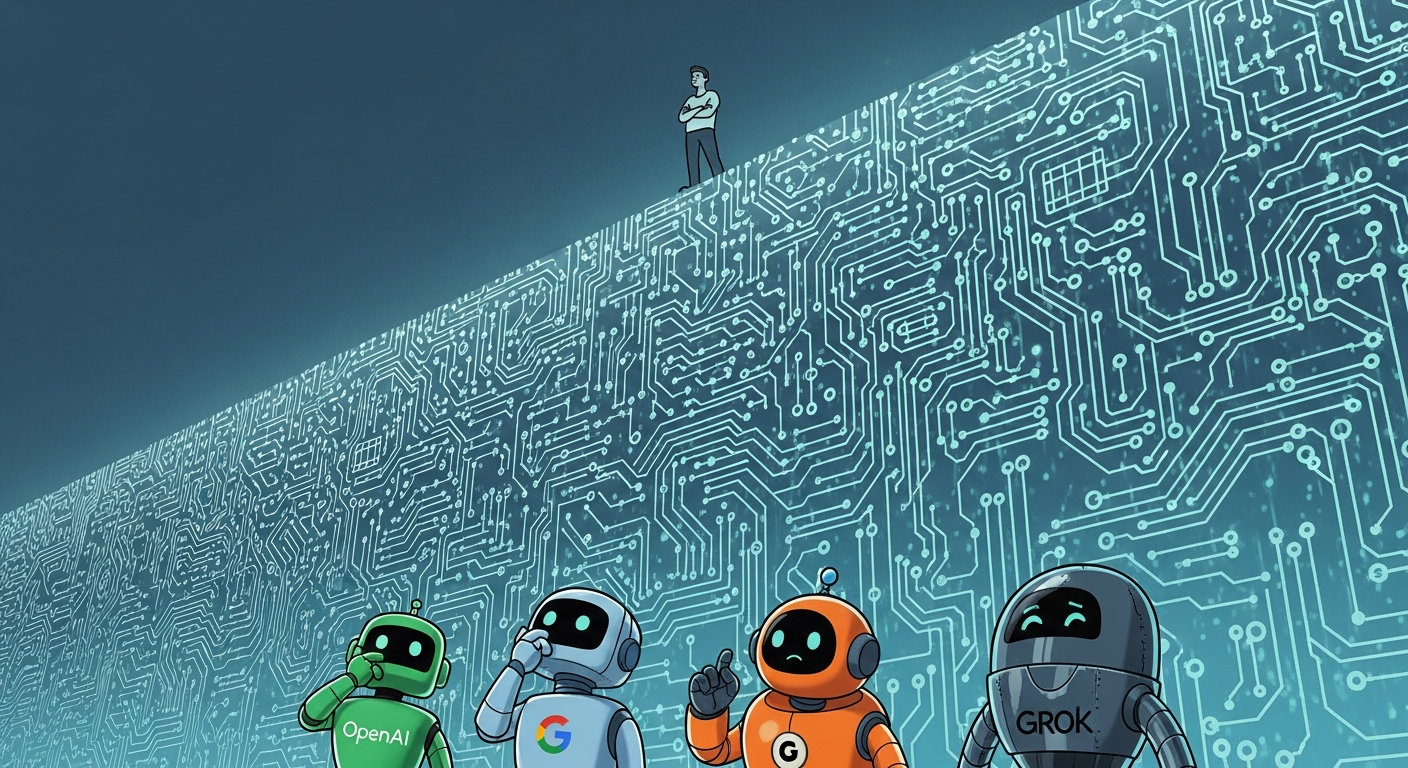

Rowe Price are all in.

---

DEEP DIVE ANALYSIS

The AGI Reality Check: ARC-AGI-3 and the 1% Ceiling

Here's the number that should give every AI executive pause today: zero point three seven percent.

That's the highest score any frontier AI model achieved on the newly released ARC-AGI-3 benchmark.

Meanwhile, every human who attempted the benchmark solved one hundred percent of tasks on their first try, with no training, no instructions, no scaffolding.

This is ARC-AGI-3, created by François Chollet's ARC Prize Foundation, and it just handed the entire AI industry a very public reality check.

Technical Deep Dive

So what exactly is ARC-AGI-3 testing? Unlike traditional benchmarks that measure whether a model has memorized information — trivia, code patterns, math solutions — ARC-AGI-3 measures something fundamentally different: the ability to encounter a completely novel environment and figure it out from scratch. The benchmark presents agents with game-like interactive scenarios across a hundred and thirty-five novel environments, roughly a thousand levels total.

There are zero instructions. The agent must discover the rules, form its own goals, and build a strategy with no prior training on anything resembling the task. It's essentially testing what happens when you drop an AI into a situation it has never seen and ask it to adapt.

The scoring is deliberately harsh. It uses a squared efficiency penalty comparing AI steps to human steps. If a human solves something in ten moves and the AI takes a hundred, the AI scores one percent.
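To make that arithmetic concrete, here is a minimal Python sketch of a squared efficiency score. It only reproduces the ten-versus-a-hundred example above; the function name, the cap at one hundred percent for agents that match or beat the human, and the handling of invalid step counts are assumptions for illustration, not the benchmark's published scoring code.

```python
def efficiency_score(human_steps: int, ai_steps: int) -> float:
    """Return a squared efficiency score between 0.0 and 1.0.

    Assumption: the score is (human_steps / ai_steps) squared, capped at 1.0
    when the agent needs no more steps than the human baseline.
    """
    if human_steps <= 0 or ai_steps <= 0:
        raise ValueError("step counts must be positive")
    # Ratio of human steps to AI steps, capped so faster agents score 100%.
    ratio = min(1.0, human_steps / ai_steps)
    # Squaring makes inefficiency expensive: 10x the steps costs 100x the score.
    return ratio ** 2


# The example from the episode: human takes 10 moves, AI takes 100.
print(f"{efficiency_score(10, 100):.2%}")  # 1.00%
```

Under this formula an agent that takes twice as many steps as the human keeps only a quarter of the credit, which is why modest inefficiency already drags scores down sharply.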

Some critics — including the account scaling01 on X — have argued this methodology is designed to produce low scores. Chollet's counter-argument is pointed: if models require elaborate human-designed scaffolding to perform well — custom prompts, specific harnesses, optimized reasoning chains — then the intelligence isn't in the model. It's in the scaffolding.

That's a distinction worth sitting with.

Financial Analysis

There's a two-million-dollar prize on Kaggle backing ARC-AGI-3, but the real financial stakes are much larger. ARC benchmarks have a history of being taken seriously by frontier labs: the ARC-AGI-2 scores went from three percent to around fifty percent in under a year as labs threw serious compute at the problem. Expect the same race here.

The benchmark arrives at a financially sensitive moment. Jensen Huang has publicly stated AGI is "already in the room." OpenAI renamed its product division "AGI Deployment" and is reportedly preparing to designate its next model, codenamed Spud, as its first AGI claim.

A one-hundred-and-twenty-billion-dollar funding round, and a potential IPO, are partly predicated on the narrative that these companies are on the doorstep of transformative general intelligence. ARC-AGI-3 doesn't just challenge that narrative — it publicly stress-tests it. Investors who've priced in AGI proximity now have a concrete benchmark showing the best models in the world can't beat one percent on a task every human solves instantly.

That's not catastrophic for valuations, but it's a data point that sophisticated investors will factor into their models. The open source versus closed source debate also matters here: if labs spend billions chasing this benchmark and open source catches up in six months at a fraction of the cost, the monetizable premium on frontier models keeps compressing.

Market Disruption

The competitive implications are significant and layered. First, every lab is now racing to crack this benchmark — and based on history, someone will make dramatic progress fast. That creates a new capability race with a very public scoreboard and a two-million-dollar incentive structure drawing in researchers globally.

Second, the benchmark challenges the fundamental architectural assumptions underlying today's dominant AI approach. NYU professor Saining Xie has made the provocative argument that large language models are "anti-Bitter Lesson" — they're built entirely on human-generated knowledge rather than learning from raw experience. What ARC-AGI-3 is testing is closer to that raw adaptive experience: can you encounter novelty and respond intelligently without human curation behind every step?

This is where Joanna's intel on architectural shifts becomes directly relevant. The Transformer may be hitting genuine limits on adaptive reasoning, and the industry is already hedging.

Alibaba's Qwen 3.5 is now using Gated Delta Networks, a hybrid architecture that can surgically update its own memory state, giving Transformer-level precision with the efficiency of a linear model. Xiaomi's Hunter Alpha is a trillion-parameter system with a million-token context window, built specifically for long-horizon agentic planning, the kind of multi-step environmental reasoning ARC-AGI-3 is actually testing. The labs that crack this benchmark probably won't do it with the same architecture that scored zero point three seven percent.

Cultural and Social Impact

The cultural weight of this story is in the framing war it's kicked off. We're no longer arguing about whether AI is impressive. We're arguing about how to define intelligence itself — and who gets to set the goalposts.

On one side, you have the AGI optimists. Jensen Huang at NVIDIA declaring it's already here. OpenAI rebranding divisions around deployment. Sam Altman positioning Spud as potentially the first true AGI. These are not just marketing claims; they're shaping public perception, regulatory posture, and investment behavior on a massive scale.

On the other side, François Chollet is making a philosophical argument that cuts to the core: human intelligence is defined by adaptability, by the ability to encounter genuinely novel situations and respond effectively. Not by pattern matching against a vast training corpus. The humans who scored one hundred percent on ARC-AGI-3 didn't study for it. They just played it. That gap, between the AI's near-zero and the human's perfect score, is Chollet's argument made empirical.

For the broader public, this matters because AI capability claims are now deeply entangled with policy decisions, job security fears, and cultural anxiety. When AI companies claim AGI is imminent or achieved, that influences how governments regulate, how workers plan their careers, and how society allocates trust to automated systems.

ARC-AGI-3 is, in part, a public accountability mechanism.

Executive Action Plan

Three concrete moves for leaders navigating this moment.

**First, decouple your AI strategy from AGI timelines.** Whether AGI arrives in six months or six years, today's models are extraordinarily capable tools that require skilled human orchestration. Anthropic's own research, published this week, confirms there's no material job displacement yet, but there is a growing skills gap, with power users pulling dramatically ahead. Your competitive advantage right now is building that internal power-user cohort, not waiting for some theoretical capability threshold to be crossed. Invest in AI fluency training now, specifically around the context-front-loading techniques that separate expert users from casual ones.

**Second, track benchmark progress as a leading indicator.** ARC-AGI-2 went from three percent to fifty percent in under a year. If you're in a sector that would be materially disrupted by genuinely adaptive AI agents (legal, financial analysis, complex research workflows), ARC-AGI-3 scores are your early warning system. Set a threshold: when frontier models hit, say, thirty percent on this benchmark, your operational assumptions about human-in-the-loop requirements need to be revisited. Build that tripwire into your strategic planning now rather than being surprised.

**Third, watch the architectural bets, not just the benchmark scores.** The models that eventually crack ARC-AGI-3 will likely look structurally different from today's Transformer-based systems. The hybrid architectures (diffusion models like Mercury 2, memory-selective systems like Qwen 3.5's Gated Delta Networks, long-horizon agentic systems like Xiaomi's Hunter Alpha) are where the next capability leap is being built.

If you're making infrastructure or platform decisions with multi-year horizons, factor in architectural diversity. Don't over-index on today's dominant paradigm just because it has the best benchmarks on yesterday's tests.
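The tripwire from the second move can be sketched in a few lines of Python. Everything here is hypothetical scaffolding: the `ScoreReport` record, the `first_crossing` helper, the placeholder dates and the model labels are all assumptions for illustration. Only the zero point three seven percent score and the thirty percent example threshold come from the episode.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional


@dataclass(frozen=True)
class ScoreReport:
    """One published benchmark result (hypothetical record format)."""
    reported_on: date
    model: str
    score_pct: float


def first_crossing(
    reports: Iterable[ScoreReport], threshold_pct: float = 30.0
) -> Optional[ScoreReport]:
    """Return the earliest report at or above the threshold, or None."""
    for report in sorted(reports, key=lambda r: r.reported_on):
        if report.score_pct >= threshold_pct:
            return report
    return None


# Placeholder data: the date and model label are illustrative, not real results.
history = [ScoreReport(date(2026, 1, 1), "frontier-model-placeholder", 0.37)]

hit = first_crossing(history)
if hit is None:
    print("Tripwire not triggered; keep current human-in-the-loop assumptions.")
else:
    print(f"Tripwire hit by {hit.model} on {hit.reported_on}; revisit assumptions.")
```

The point of writing it down is less the code than the commitment: the threshold is chosen in advance, so the review is triggered by the scoreboard rather than by a headline.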
