OpenAI Launches GPT-5.4 Mini and Nano Subagents

Episode Summary

TOP NEWS HEADLINES Following yesterday's coverage of OpenAI's strategic pivot, new details emerged: Applications CEO Fidji Simo issued a company-wide "code red" memo naming Anthropic's enterprise ...

Full Transcript

TOP NEWS HEADLINES

Following yesterday's coverage of OpenAI's strategic pivot, new details emerged: Applications CEO Fidji Simo issued a company-wide "code red" memo naming Anthropic's enterprise dominance as an existential threat, with specific projects — Sora, the Atlas browser, and dedicated hardware — now formally deprioritized.

Internal engineers are now mandated to default to agentic workflows over traditional tools.

Following yesterday's coverage of NVIDIA GTC, new details emerged: Jensen Huang clarified in a Stratechery interview that reasoning latency — not raw generation speed — is now the primary constraint on AI utility, and NVIDIA announced expanded partnerships with Uber and Lyft to serve as the technical backbone for their robotaxi fleets.

Mistral launched Forge at GTC — a platform letting enterprises train full frontier-grade models from scratch on their own proprietary data, with zero exposure to Mistral's infrastructure.

Early partners include ASML, Ericsson, and the European Space Agency.

Microsoft is reorganizing its Copilot teams under former Snap exec Jacob Andreou, while Microsoft AI CEO Mustafa Suleyman shifts focus entirely to building superintelligence in-house. That is a significant signal given Copilot's 6 million daily users versus ChatGPT's 440 million.

OpenAI launched GPT-5.4 Mini and Nano today: purpose-built subagents designed for high-volume, low-cost autonomous task execution, and that's our deep dive.

DEEP DIVE ANALYSIS

The Rise of Subagents: GPT-5.4 Mini, Nano, and the New Compute Economics

Let's talk about what actually happened today with OpenAI's model release, because the framing matters enormously. This isn't just another "smaller, faster, cheaper" model announcement.

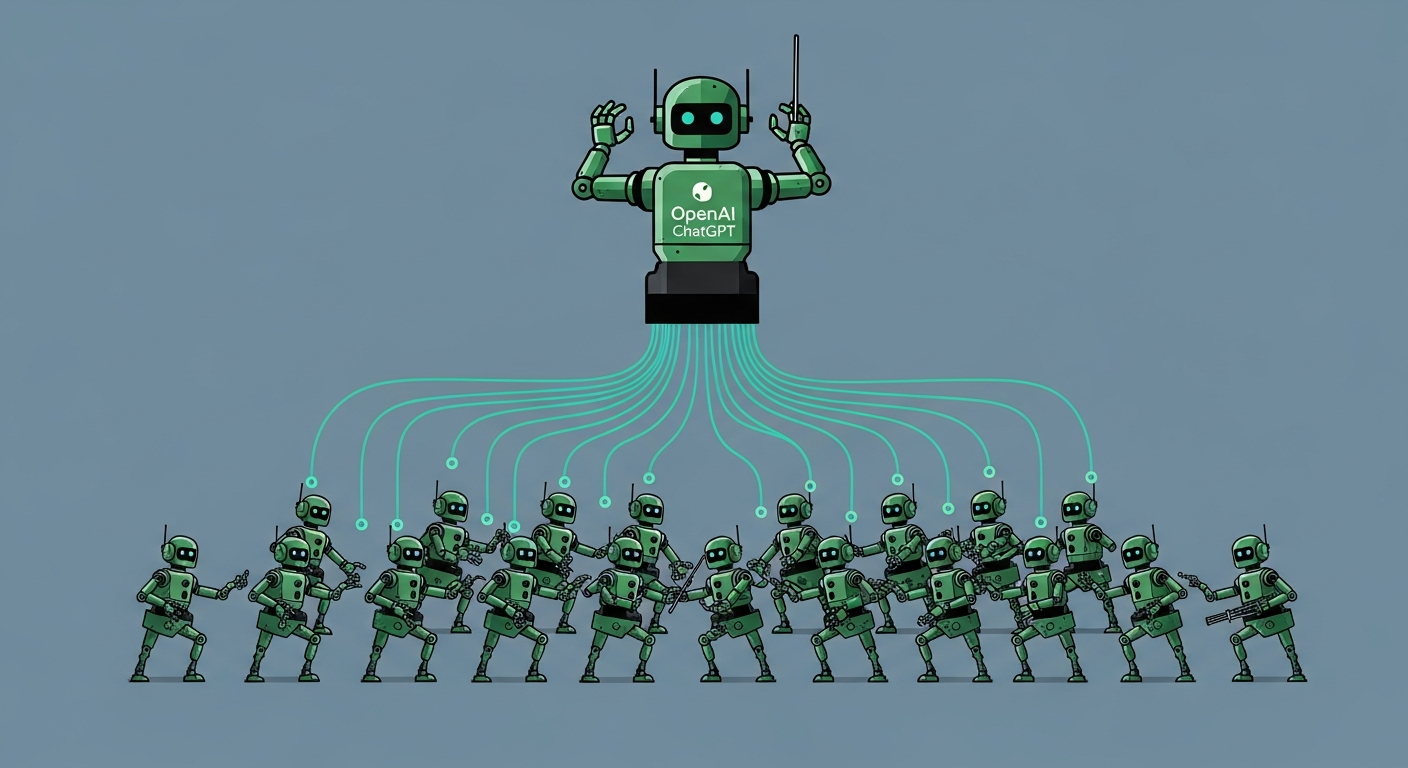

OpenAI is making a structural bet on how AI systems will actually be deployed going forward. The keyword here is *subagent*, and if you haven't internalized what that means yet, today is the day.

**Technical Deep Dive**

Here's the architecture: GPT-5.4, the full flagship, acts as the project manager. It plans, reasons, coordinates. Then it delegates parallel execution tasks to a swarm of GPT-5.4 Mini or Nano instances. Search the codebase. Review that file. Run those tests. Each Mini instance is fast and cheap; collectively they get the job done without burning through the compute budget of the senior model.

The benchmarks back this up. GPT-5.4 Mini scores 54.4% on SWE-Bench Pro, just three points behind the full GPT-5.4. On OSWorld computer-use tasks, it hits 72.1%, nearly matching the flagship.

It runs more than twice as fast as the previous GPT-5 Mini generation. Nano comes in at just twenty cents per million input tokens, undercutting most of the market, and is currently API-only. What's technically significant here is the abstraction layer this creates.

You're no longer routing between models manually. The orchestrating model handles delegation. The developer just defines the task.
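To make that delegation pattern concrete, here is a minimal Python sketch. It is not OpenAI's API: `plan`, `run_subagent`, and `orchestrate` are hypothetical names, and the "models" are plain functions so the example runs offline. It only shows the shape of the pattern, one planner fanning work out to parallel workers.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class SubTask:
    kind: str    # e.g. "search", "review", "test"
    target: str  # what the worker should act on


def plan(objective: str) -> list[SubTask]:
    """Hypothetical stand-in for the flagship model: split one objective into subtasks."""
    return [
        SubTask("search", f"codebase for '{objective}'"),
        SubTask("review", "that file"),
        SubTask("test", "those tests"),
    ]


def run_subagent(task: SubTask) -> str:
    """Hypothetical stand-in for one Mini or Nano instance; a real worker would call a model."""
    return f"{task.kind} done: {task.target}"


def orchestrate(objective: str, max_workers: int = 3) -> list[str]:
    """Plan once, fan the subtasks out in parallel, and collect the results."""
    subtasks = plan(objective)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in input order even though the work runs concurrently.
        return list(pool.map(run_subagent, subtasks))


results = orchestrate("fix login bug")
for line in results:
    print(line)
```

The developer-facing surface here is a single call to `orchestrate` with an objective; the planning and fan-out stay behind it, which is the abstraction the segment describes.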

This is the software architecture of agentic AI becoming a real product pattern, not just a research concept.

**Financial Analysis**

Let's run the numbers on why this pricing matters. GPT-5.4 Mini at seventy-five cents per million input tokens isn't actually the cheapest option in the market: Gemini 3 Flash comes in at fifty cents, and Claude Haiku 4.5 has competitive positioning. Mercury 2, the diffusion-based model from Inception Labs, generates tokens roughly in parallel and hits around twenty-five cents.

So OpenAI is not winning on raw price. What they're winning on is ecosystem lock-in. Mini is already live in the API, in Codex, and in ChatGPT's free tier through Thinking mode.

Nano is API-only for now, which is a classic land-and-expand move: seed adoption among developers, then push into the broader product surface. The Codex quota mechanic is particularly clever: Mini uses thirty percent of GPT-5.4's Codex allocation, meaning developers get roughly three times the throughput for the same spend.

That's not a price cut — that's a volume play. With Codex already at two million weekly active users — quadrupled since January — OpenAI is monetizing the enterprise coding layer it nearly ceded to Anthropic. The IPO signal is real here: making ChatGPT a productivity tool generates the revenue multiples that a public market story requires.
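The pricing and quota arithmetic from this segment can be checked with a few lines of Python. This is a sketch under stated assumptions: the prices are the per-million input-token figures quoted in the episode, the 500-million-token monthly workload is a made-up number for illustration, and Claude Haiku 4.5 and output-token pricing are left out because the episode gives no figures for them.

```python
# Input-token prices in USD per million tokens, as quoted in this episode.
PRICE_PER_MILLION = {
    "GPT-5.4 Mini": 0.75,
    "Gemini 3 Flash": 0.50,
    "Mercury 2": 0.25,  # quoted as "around twenty-five cents"
    "GPT-5.4 Nano": 0.20,
}


def input_cost(model: str, tokens: int) -> float:
    """Cost in USD of sending `tokens` input tokens to `model`."""
    return PRICE_PER_MILLION[model] * tokens / 1_000_000


def throughput_multiplier(quota_fraction: float) -> float:
    """How many times more work fits in the same quota at a given per-task fraction."""
    return 1.0 / quota_fraction


MONTHLY_TOKENS = 500_000_000  # hypothetical workload, not a figure from the episode

# Cheapest first, so the ranking by raw price is easy to read.
for model in sorted(PRICE_PER_MILLION, key=PRICE_PER_MILLION.get):
    print(f"{model}: ${input_cost(model, MONTHLY_TOKENS):,.2f}")

codex_multiplier = throughput_multiplier(0.30)
print(f"Codex throughput vs. flagship: {codex_multiplier:.2f}x")
```

Dividing one by the thirty percent quota share gives about 3.33, so "three times the throughput" is a slight round-down of the quoted figure.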

**Market Disruption**

This launch directly escalates the war with Anthropic, and the timing is not accidental. Claude Code has been eating OpenAI's lunch in enterprise developer workflows. Anthropic launched Cowork this week, a mobile-to-desktop handoff that lets Claude run tasks on your computer while you step away.

That's a direct play for the agentic workflow market. And now there are even hints circulating of an Anthropic "Codex killer" coming next week. What we're watching is a compression of competitive cycles.

Six months ago, model releases happened quarterly. Now we're seeing weekly capability leaps and direct product counterpunches. The subagent architecture from OpenAI means developers building multi-step autonomous pipelines now have a cost-effective way to scale horizontally — many cheap workers, one smart director.

That's a fundamentally different cost curve than running every task through a single premium model. The broader market disruption is this: the model router is being absorbed into the orchestration layer itself. That squeezes out third-party routing tools and consolidates more of the value chain inside OpenAI's platform.

Every developer who builds on Codex with Mini subagents is now deeper inside OpenAI's infrastructure, not sitting on top of it.

**Cultural and Social Impact**

Here's the shift that doesn't get enough attention: we are moving from AI as a *tool you use* to AI as a *workforce you manage*. The mental model is changing.

You don't prompt GPT-5.4 Mini the way you prompt ChatGPT. You define objectives, set constraints, and let a hierarchy of agents execute.

That's closer to managing a team than using software. For knowledge workers, this is both liberating and disorienting. The Neuron's framing — "your AI just hired its own interns" — is funny but accurate.

The senior model delegates. The junior models execute. And the human sits one layer above all of it, reviewing outputs rather than generating them.

That's a fundamentally different relationship with technology. For non-developers, the gap is still real. Codex is built for engineers.

Anthropic's Cowork is explicitly trying to bridge that gap for business users. The next six months will determine whether the agentic workflow becomes accessible to people who don't write code, or whether it remains a developer-only superpower.

**Executive Action Plan**

Three concrete moves if you're leading a technology or product organization right now.

First, audit your current AI spend against the new cost structure. If you're running any high-volume classification, extraction, ranking, or search tasks through premium models, you have an immediate cost reduction opportunity with Nano at twenty cents per million input tokens. This is not a future consideration: the API is live today.

Second, pilot the subagent architecture in one real workflow within the next thirty days. Pick a multi-step process (code review, document processing, competitive research) and map which steps require senior reasoning versus fast execution. Then rebuild it with GPT-5.4 as orchestrator and Mini as executor. Measure latency and cost. The benchmark numbers suggest you'll retain ninety-five percent of quality at a fraction of the spend.

Third, decide now whether your AI strategy is platform-native or model-agnostic. OpenAI's ecosystem integration — Codex, ChatGPT, API, desktop app — creates real switching costs. Anthropic is building competitive depth with Claude Code, Cowork, and Skills.

These ecosystems are diverging fast. Staying deliberately multi-vendor is a valid strategy, but it requires active architecture decisions, not passive drift. The subagent era has started.

The question is whether your organization is designing for it or still treating every AI interaction as a one-shot prompt.
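For the second move in the action plan, a pilot can start as a very small measurement harness. The Python sketch below is illustrative only: the step list, the `fake_model_call` stub, and the orchestrator price are hypothetical placeholders, and only the seventy-five cent executor price comes from the episode. It tags each step with the tier it seems to need, then totals latency, tokens, and cost per tier.

```python
import time
from typing import Callable

# Hypothetical workflow: each step is tagged with the tier it seems to need.
STEPS = [
    ("plan the review", "orchestrator"),
    ("search the codebase", "executor"),
    ("review the file", "executor"),
    ("run the tests", "executor"),
]

# USD per million input tokens. The executor price is the Mini figure quoted in
# the episode; the orchestrator price is a placeholder, not a real quote.
PRICE = {"orchestrator": 5.00, "executor": 0.75}


def fake_model_call(step: str) -> int:
    """Stand-in for a model call; returns a made-up input-token count."""
    return 1_000 * len(step.split())


def run_pilot(call: Callable[[str], int] = fake_model_call) -> dict[str, dict[str, float]]:
    """Run every step once and total latency, tokens, and cost per tier."""
    totals = {tier: {"seconds": 0.0, "tokens": 0.0, "usd": 0.0} for tier in PRICE}
    for step, tier in STEPS:
        start = time.perf_counter()
        tokens = call(step)
        elapsed = time.perf_counter() - start
        totals[tier]["seconds"] += elapsed
        totals[tier]["tokens"] += tokens
        totals[tier]["usd"] += PRICE[tier] * tokens / 1_000_000
    return totals


report = run_pilot()
for tier, stats in report.items():
    print(tier, stats)
```

Swapping `fake_model_call` for a function that calls whichever models you are piloting keeps the rest of the harness unchanged, which makes before-and-after comparisons straightforward.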
