Pentagon Ultimatum Triggers Anthropic Reckoning as Perplexity Reshapes AI Market

Episode Summary

TOP NEWS HEADLINES Following yesterday's coverage of the Pentagon-Anthropic standoff, new details emerged: Defense Secretary Pete Hegseth has given Anthropic until Friday to comply with broad mili...

Full Transcript

TOP NEWS HEADLINES

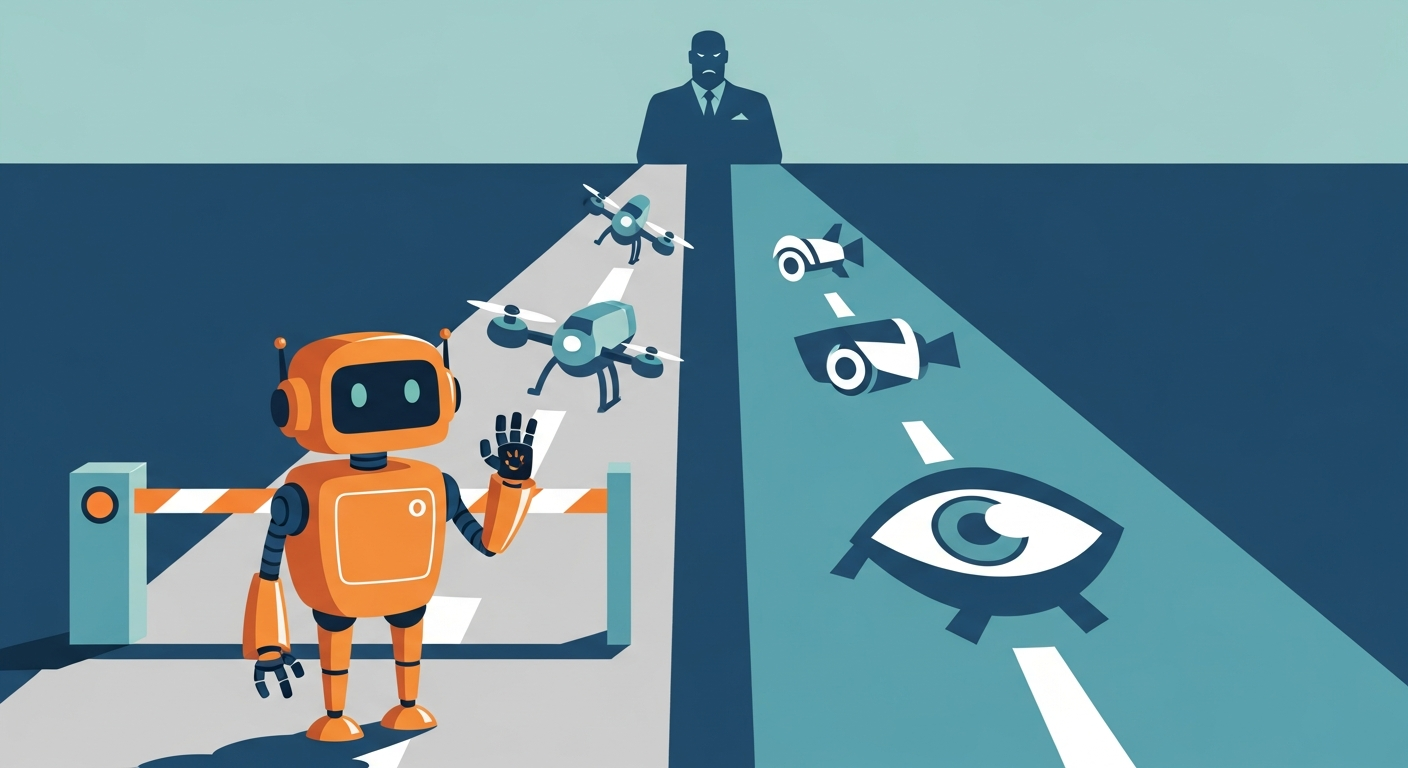

Following yesterday's coverage of the Pentagon-Anthropic standoff, new details emerged: Defense Secretary Pete Hegseth has given Anthropic until Friday to comply with broad military access demands or face being labeled a "supply chain risk" — a designation normally reserved for foreign adversaries.

The two sticking points remain clear: Anthropic won't budge on autonomous weapons or mass surveillance of Americans.

Following yesterday's coverage of Anthropic softening its Responsible Scaling Policy, we now know the revised version eliminates the mandatory "pause development" red lines, replacing them with flexible responses — but only if Anthropic is leading the capability race and risks are catastrophic.

Following yesterday's Claude Cowork updates, Anthropic added Scheduled Tasks, letting recurring workflows run automatically on a set cadence.

Claude Code also got remote control — start a session in your terminal, manage it later from your phone.

And Anthropic quietly acquired Vercept, a computer-use agent startup, to sharpen Claude's ability to operate live applications.

Following yesterday's DeepSeek coverage, Reuters confirmed DeepSeek is withholding its upcoming V4 model from NVIDIA and AMD, giving early optimization access exclusively to domestic Chinese chipmakers like Huawei instead.

NVIDIA reported record Q4 revenue of $68.1 billion — up 73% year-over-year — driven almost entirely by $62.3 billion in data center sales.

They're guiding $78 billion next quarter and previewed the Rubin chip platform promising ten times cheaper inference than Blackwell.

Someone jailbroke Claude to steal 150 gigabytes of sensitive Mexican government data — taxpayer records, voter information, internal credentials — over the course of a month.

Anthropic has since banned the accounts and says it's enhancing anti-misuse tools.

DEEP DIVE ANALYSIS: Perplexity Computer and the Rise of the Orchestrator

Technical Deep Dive

Here's the core architecture of what Perplexity just shipped, and why it's structurally different from anything else on the market. Perplexity Computer doesn't run one model. It runs nineteen.

When you describe an outcome — say, "build me a competitive analysis dashboard with live market data" — the system decomposes that request into subtasks and routes each one to a specialist. A reasoning model plans the approach. A coding model writes the scripts.

A research model pulls live data. An image model handles visuals. All simultaneously, in isolated cloud sandboxes.
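To make that routing pattern concrete, here is a minimal Python sketch of an orchestrator that splits one request into subtasks and runs each on a specialist concurrently. Everything in it is an assumption for illustration: the `SPECIALISTS` table, the `decompose` and `run_in_sandbox` helpers, and the model labels are hypothetical stand-ins, not Perplexity's actual implementation or API.

```python
import asyncio
from dataclasses import dataclass

# Hypothetical specialist roster: role -> model label. These labels are
# placeholders for illustration, not Perplexity's real configuration.
SPECIALISTS = {
    "plan": "reasoning-model",
    "code": "coding-model",
    "research": "research-model",
    "image": "image-model",
}


@dataclass
class Subtask:
    role: str
    prompt: str


def decompose(request: str) -> list[Subtask]:
    """Stand-in for the planning step: split one request into subtasks."""
    return [
        Subtask("plan", f"Outline an approach for: {request}"),
        Subtask("code", f"Write scripts for: {request}"),
        Subtask("research", f"Gather live data for: {request}"),
        Subtask("image", f"Produce visuals for: {request}"),
    ]


async def run_in_sandbox(task: Subtask) -> dict:
    """Stand-in for an isolated environment that calls one specialist."""
    model = SPECIALISTS[task.role]
    await asyncio.sleep(0)  # placeholder for the real model call
    return {"role": task.role, "model": model, "status": "done"}


async def orchestrate(request: str) -> list[dict]:
    """Route every subtask to its specialist and run them concurrently."""
    subtasks = decompose(request)
    return await asyncio.gather(*(run_in_sandbox(t) for t in subtasks))


if __name__ == "__main__":
    results = asyncio.run(orchestrate("competitive analysis dashboard"))
    for r in results:
        print(r["role"], "->", r["model"])
```

`asyncio.gather` returns results in the order the subtasks were submitted, so the caller can line each result up with its subtask even though the work overlaps in time.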

The sandboxing is crucial and underappreciated. Current autonomous agents — including OpenClaw running locally — have a well-documented fragility problem. As one former OpenAI engineer put it, a system that's 95% accurate on individual steps becomes chaotic over a twenty-step workflow.
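The arithmetic behind that claim is worth a quick look. Here is a small Python sketch, assuming each step succeeds independently with the same probability. That is a simplification, and the `chain_success` helper is a hypothetical name used only for illustration.

```python
def chain_success(step_accuracy: float, steps: int) -> float:
    """Chance that every step succeeds, assuming independent steps."""
    return step_accuracy ** steps


if __name__ == "__main__":
    for steps in (1, 5, 10, 20):
        print(f"{steps:>2} steps: {chain_success(0.95, steps):.1%}")
```

Under that assumption, a twenty-step workflow at 95% per-step accuracy finishes cleanly only about 36% of the time, which is the reliability gap being described.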

Meta's own Summer Yue, who works on AI safety, had to sprint to her Mac Mini after her local OpenClaw agent deleted her entire inbox. Perplexity's sandboxed cloud environment puts a structural safety net under that reliability gap. Each task gets its own isolated execution environment.

Persistent memory means the system remembers your projects, preferences, and prior work across sessions. And critically, tasks can run for hours, days, or months without supervision.

The underlying model roster reportedly includes Claude Opus 4.6 for core reasoning, GPT-5.2 for long-context queries, Grok for lightweight fast tasks, Veo 3.1 for video processing, and Gemini for deep research.

Financial Analysis

Let's talk money, because the business model here is genuinely interesting. Perplexity Computer is priced at two hundred dollars per month for Max subscribers, with a consumption-based credit system layered on top — ten thousand monthly credits, plus a twenty-thousand credit launch bonus. Enterprise access is coming.

That pricing positions this squarely against OpenAI's Pro tier and Anthropic's enterprise offerings. But the competitive framing Perplexity CEO Aravind Srinivas chose is telling. He specifically called out Claude, writing that "the biggest weakness of Claude is that it only coworks with Claude."

That's not a casual jab. That's a market positioning statement designed to highlight a structural advantage. The business logic is this: Perplexity doesn't need to win the model race. It needs to win the orchestration layer.

If you can credibly claim that your system picks the *best* model for each task rather than locking you into one lab's ecosystem, you occupy a position that's genuinely defensible even as individual models improve and commoditize. This is the "AWS for AI" bet. Amazon didn't win cloud by building the best servers.

It won by building the layer that made servers interchangeable. Perplexity is betting the same logic applies to AI models. The real revenue question is whether enterprise customers pay a platform premium for model-agnostic orchestration — or whether they'd rather negotiate directly with Anthropic and OpenAI.

MatX also raised five hundred million dollars this week for LLM-specific chips, reinforcing that the entire compute and infrastructure stack beneath these orchestration plays is attracting serious capital.

Market Disruption

The competitive implications run in multiple directions simultaneously. For Anthropic and OpenAI, this is a direct threat to their closed-ecosystem strategies. Both companies have built products around the idea that their model is the one you want for everything.

Claude Cowork runs Claude. ChatGPT runs GPT. Perplexity Computer runs whoever is best for the task.

If that framing takes hold with enterprise buyers, it reframes the entire competitive dynamic — from "which AI do you subscribe to" to "which orchestration layer do you trust." For the agentic middleware layer — the Eigent-class startups we covered when Anthropic launched Cowork back in January — this is another compression event. Perplexity isn't just competing with the AI labs.

It's competing with every startup that built a workflow automation layer on top of single-model APIs. Why pay for middleware when the orchestrator is the product? The Google Antigravity ban story from VentureBeat this week is instructive context here.

When Google restricted OpenClaw users from accessing its services — some losing their accounts entirely — it exposed what happens when you build autonomous agents on top of consumer web interfaces you don't control. Perplexity's sandboxed infrastructure is a direct answer to that fragility. Hosted, controlled, isolated.

The player most likely to respond fast is Anthropic, given the direct Srinivas quote. Watch for Claude Cowork to announce multi-model support as a defensive move. OpenAI already has some multi-model flexibility in its products; the question is whether they lean into it as a feature or continue betting on GPT monoculture.

Cultural & Social Impact

The user behavior shift here is significant and worth naming clearly. The dominant AI workflow today involves context-switching. You use one tool for research, another for code, another for images, another for writing.

The cognitive overhead is real and the friction compounds across a workday. Perplexity Computer's pitch — describe what you want, get back a finished outcome — is a direct attack on that friction. Early demos are already going viral for good reason.

One user one-shotted a live satellite tracking web app from a single prompt. Another built a real-time stock analysis terminal pulling live financial data. A third built two micro-apps, four research packets, and an automation pipeline overnight.

These aren't cherry-picked edge cases; they represent a genuine category shift in what a non-engineer can produce in a single session. The Steve Jobs quote Srinivas invoked to frame the launch is worth dwelling on: "Musicians play their instruments. I play the orchestra."

The cultural implication is democratization of orchestration. You stop asking "which AI should I use for this?" and start asking "what do I want done?" That's a meaningful cognitive shift, and it mirrors how smartphones changed the question from "which application do I open?" to "what do I want to happen?"

The darker note from the AI Secret newsletter is worth flagging: when your agent's memory, logs, credentials, and execution history sit on multi-tenant shared infrastructure, isolation is a policy choice, not a physical boundary.

As these systems become more capable and more trusted, the security surface area grows with them.

Executive Action Plan

Three specific moves for leaders watching this space. **First, audit your AI tool consolidation assumptions.** If your organization standardized on a single AI provider for simplicity, Perplexity Computer reframes that decision.

Model-agnostic orchestration may deliver better outcomes on complex workflows than any single-model subscription. Run a structured pilot comparing single-model workflows against multi-model orchestration on your three most common high-value use cases. Measure output quality, not just speed.

**Second, map your agent infrastructure risk.** The Antigravity ban and the OpenClaw inbox-deletion story are both warnings. If your team is running autonomous agents — locally or through third-party tools — you need an inventory of what permissions those agents hold and what happens when the underlying platform changes its access policies.

Hosted, sandboxed infrastructure like Perplexity Computer offers structural risk reduction. The question is whether the vendor lock-in on Perplexity's platform introduces new risks that offset those gains.

**Third, pressure-test your AI vendor relationships now, before the Pentagon standoff resolves.** Anthropic is facing a Friday deadline that could result in it being labeled a national security supply chain risk. If your enterprise has significant Claude dependencies (in Cowork, Claude Code, or direct API integrations), you need a contingency. Not because Anthropic will disappear, but because government designation events create procurement friction that can halt enterprise renewals regardless of technical merit.
