AI-Powered Hackers Breach 600 Firewalls Across 55 Countries

Episode Summary

TOP NEWS HEADLINES Following yesterday's coverage of the India AI Impact Summit awkward handshake moment, new details emerged: over 70 countries officially signed the "Delhi Declaration" on AI gov...

Full Transcript

TOP NEWS HEADLINES

Following yesterday's coverage of the awkward handshake moment at the India AI Impact Summit, new details emerged: more than 70 countries officially signed the "Delhi Declaration" on AI governance, Demis Hassabis predicted AGI within five years (half his previous estimate), and Sam Altman went even further, claiming superintelligence is just a couple of years away.

Meanwhile, the White House flatly rejected global AI governance, with official Michael Kratsios stating, "We totally reject global governance of AI."

Amazon revealed that a small group of Russian-speaking hackers used commercial AI tools to breach over 600 Fortinet firewalls across 55 countries in a matter of weeks, a scale Amazon says would have been impossible without AI assistance.

OpenAI quietly scaled back its compute spending target from 1.4 trillion dollars down to 600 billion by 2030, while simultaneously projecting 280 billion dollars in revenue.

A Cambridge-led study found that only 4 of 30 top AI agents published formal safety evaluations, with browser agents — the most autonomous category — missing 64% of required safety disclosures.

Anthropic launched Claude Code Security, a tool that scans codebases for vulnerabilities and auto-suggests patches, and NVIDIA open-sourced DreamDojo, a robotics world model trained on 44,000 hours of human video.

---

DEEP DIVE ANALYSIS

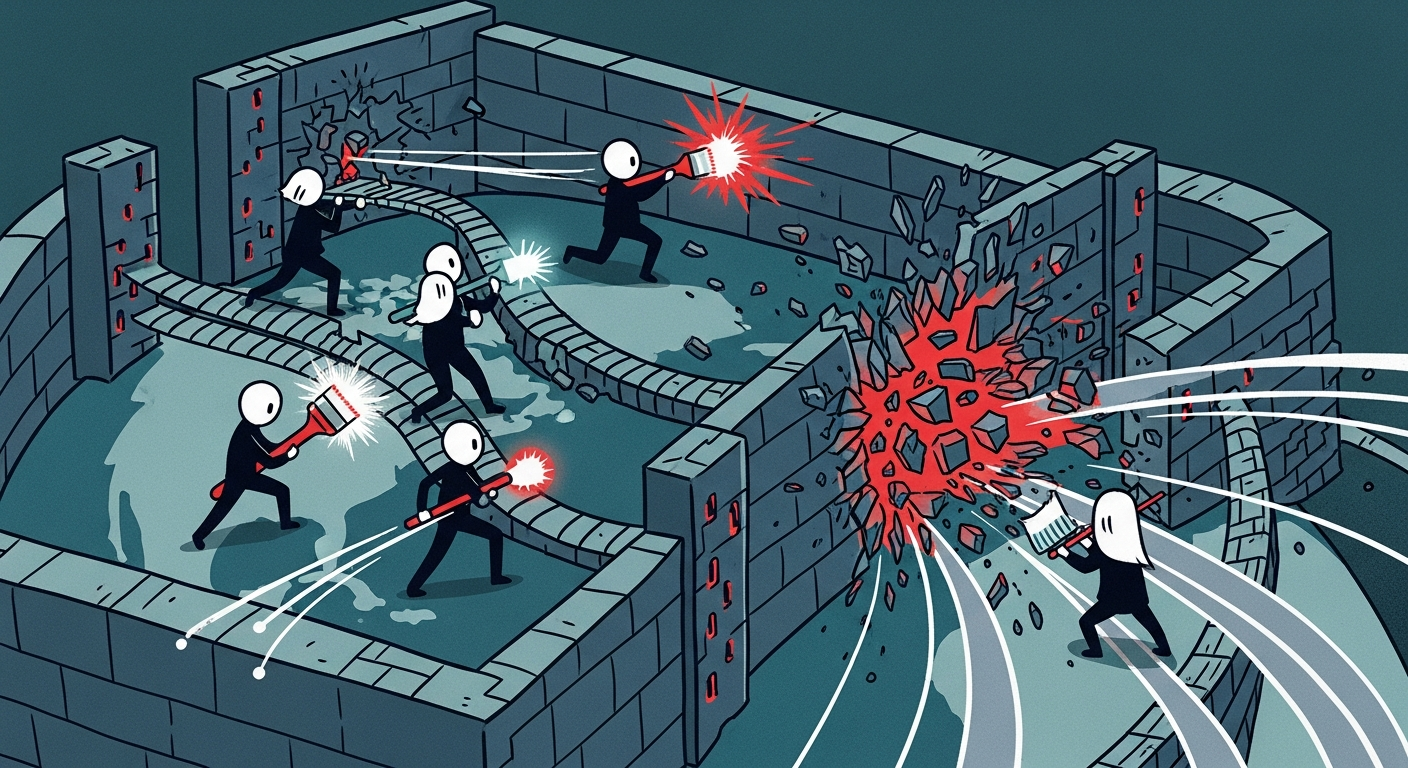

**The Hacker Just Got a Co-Pilot: AI-Augmented Cyberattacks Are Here**

Let's talk about the Amazon firewall breach story, because I think it's the most underreported and most consequential story in today's news cycle. A small group of Russian-speaking hackers used off-the-shelf commercial AI tools (nothing exotic, nothing classified) to compromise over 600 Fortinet firewalls across 55 countries in a matter of weeks. Amazon's own security team said explicitly that this scale of attack would have been impossible without AI.

Let that sink in for a second. We spend a lot of time talking about AI making developers more productive, making marketers more efficient. We don't talk nearly enough about what happens when that same productivity multiplier lands in the hands of adversaries.

**Technical Deep Dive**

Here's what actually happened technically. The attackers exploited weak passwords and exposed management ports on Fortinet FortiGate devices, enterprise-grade firewalls used by companies and governments worldwide. That part isn't new.
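When weak passwords on an exposed management port are being guessed, the attempts tend to leave a recognizable trace: bursts of failed logins from the same source. As a minimal defensive sketch, assuming you can export authentication events from your own devices as (timestamp, source IP, success) records in time order, a sliding-window counter can flag that pattern. The `LoginEvent` and `BruteForceDetector` names and the five-failures-per-minute threshold are hypothetical illustrations, not a Fortinet feature or API.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class LoginEvent:
    timestamp: float  # seconds since the epoch; events assumed to arrive in time order
    source_ip: str
    success: bool


class BruteForceDetector:
    """Hypothetical detector: flag a source IP once it exceeds max_failures
    failed logins inside a sliding window of window_seconds."""

    def __init__(self, max_failures: int = 5, window_seconds: float = 60.0):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        # Per-source timestamps of recent failed logins.
        self._failures = defaultdict(deque)

    def observe(self, event: LoginEvent) -> bool:
        """Record one event and return True if its source should be flagged."""
        if event.success:
            return False
        window = self._failures[event.source_ip]
        window.append(event.timestamp)
        # Discard failures that have aged out of the sliding window.
        while window and event.timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_failures


detector = BruteForceDetector(max_failures=5, window_seconds=60.0)
# Six failed attempts from one documentation-range address, two seconds apart.
events = [LoginEvent(float(t), "203.0.113.7", False) for t in range(0, 12, 2)]
flags = [detector.observe(e) for e in events]
print(flags)  # [False, False, False, False, False, True]
```

A per-source threshold is easy to evade: an AI-orchestrated campaign can spread attempts across many addresses and stay under any single counter's limit. That's one reason detection like this complements MFA rather than replacing it.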

What's new is the operational tempo. Traditionally, a small team of hackers scanning for vulnerable devices, crafting exploit attempts, and managing hundreds of simultaneous intrusions would take months. AI tools compressed that timeline dramatically.

These systems can automate reconnaissance: identifying exposed ports at scale, correlating vulnerability databases, generating customized exploit payloads, and even managing post-breach persistence across hundreds of targets simultaneously. What used to require a large, well-funded nation-state operation can now apparently be executed by a small group using commercial tools you can access today. The attack surface hasn't changed. The speed and scale of exploitation have changed fundamentally.

**Financial Analysis**

The financial implications here operate on two levels. First, there's the direct cost of this specific breach: forensics, remediation, potential regulatory penalties across 55 countries, and reputational damage for organizations running Fortinet infrastructure.

But the bigger financial story is what this signals for the cybersecurity market broadly. The security industry has been growing steadily, but it's been growing at human speed. Threat actors just got access to machine speed. That gap is now an enormous market opportunity.

Expect accelerated investment in AI-native security platforms: companies like CrowdStrike, Palo Alto Networks, and a wave of startups are going to see increased demand and increased valuations. Cyber insurance premiums are going up. Compliance costs are going up.

And critically, any enterprise that has been treating AI security investment as a future concern rather than an immediate one just got a very expensive wake-up call. The Fortinet breach is effectively a forcing function for security budget conversations happening in boardrooms right now.

**Market Disruption**

This attack reshapes the competitive dynamics of the entire cybersecurity sector. Traditional security vendors built their products around human-speed threat models: signature-based detection, scheduled audits, human analyst review cycles. Those architectures are now fundamentally mismatched against AI-augmented adversaries operating at machine speed. The companies that win in this new environment are the ones offering continuous, AI-driven behavioral monitoring that can detect anomalies in real time rather than in retrospect.

There's also a significant disruption coming for managed security service providers (MSSPs). Their value proposition has been selling human expertise at scale. When the threats they're defending against are orchestrated by AI systems that don't sleep, don't get tired, and can manage thousands of attack vectors simultaneously, the human-hours model breaks down. Expect consolidation in the MSSP space and a rapid pivot toward AI-native security operations.

And for enterprise buyers, this is accelerating the zero-trust architecture conversation from strategic initiative to operational emergency.
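Continuous behavioral monitoring comes down to learning what normal looks like for each device and flagging departures as they happen, not in a later review. The following is a deliberately simple statistical stand-in for the AI-driven platforms described above, not how any particular vendor works: it keeps an exponentially weighted moving average (EWMA) of one metric's mean and variance and flags readings more than a few standard deviations from that baseline. The class name, the example metric, and the `alpha`, `threshold`, and `warmup` defaults are hypothetical choices.

```python
import math


class EwmaAnomalyDetector:
    """Hypothetical streaming baseline for one metric, using an exponentially
    weighted moving average (EWMA) of its mean and variance."""

    def __init__(self, alpha: float = 0.1, threshold: float = 4.0, warmup: int = 10):
        self.alpha = alpha          # weight of each new observation
        self.threshold = threshold  # standard deviations that count as anomalous
        self.warmup = warmup        # observations to absorb before flagging anything
        self.mean = 0.0
        self.var = 0.0
        self.count = 0

    def update(self, value: float) -> bool:
        """Score value against the current baseline, then fold it in.
        Returns True if value is anomalous."""
        self.count += 1
        if self.count == 1:
            self.mean = value  # seed the baseline with the first observation
            return False
        deviation = value - self.mean
        std = math.sqrt(self.var)
        is_anomaly = self.count > self.warmup and abs(deviation) > self.threshold * std
        # Incremental EWMA update of the mean and variance.
        self.mean += self.alpha * deviation
        self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)
        return is_anomaly


# Hypothetical metric: outbound connections per minute from one firewall.
monitor = EwmaAnomalyDetector()
readings = [10, 12] * 15 + [100]
flags = [monitor.update(r) for r in readings]
print(flags[-1], any(flags[:-1]))  # True False
```

Each reading costs constant time and memory, which is what lets a baseline like this run on every event as it arrives rather than in a scheduled audit. Real platforms correlate many signals per device; this isolates the core idea.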

**Cultural & Social Impact**

The cultural implications of this story go beyond the enterprise. We're entering a period where the asymmetry between attack and defense is fundamentally changing in ways that affect everyone. Critical infrastructure (hospitals, power grids, water systems) runs on networked devices similar to the firewalls compromised here. When AI tools lower the barrier for large-scale cyberattacks to the point that a small group can breach over 600 firewalls across 55 countries, the implicit social contract around digital safety starts to crack.

There's also a profound governance implication. The Cambridge study released today found that most AI agents lack formal safety evaluations. We're deploying autonomous systems into sensitive environments (enterprise networks, healthcare, finance) without adequate safety frameworks, while those same environments face AI-augmented threats. That's a dangerous combination. Policymakers, regulators, and security practitioners need to internalize that the democratization of AI capability is not a neutral force: it empowers defenders and attackers alike, and right now the attackers appear to be moving faster.

**Executive Action Plan**

Three concrete actions for executives this week.

First, audit your exposed attack surface immediately, specifically management interfaces, remote access portals, and any internet-facing infrastructure that relies on password authentication. The Fortinet breach exploited weak passwords and exposed ports. These are fixable right now. Mandate MFA on everything external-facing, full stop.

Second, accelerate your AI security investment conversation. If your current security budget was set before AI-augmented attacks became a demonstrated reality, it was set for a different world. Bring your CISO into a board-level conversation this quarter, framed specifically around AI-speed threats versus your current detection and response capabilities.

Third, demand safety evaluations from every AI vendor in your stack. The Cambridge study showing that only 4 of 30 top AI agents published formal safety evaluations is a red flag for any enterprise integrating agentic AI. Before your next AI deployment, require documented safety evaluations as a procurement condition: not a nice-to-have, but a non-negotiable vendor requirement.
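The first action lends itself to automation. As a minimal sketch, assuming you already keep an inventory of external services (the `Service` fields, the `MANAGEMENT_SERVICES` labels, and the hosts and ports below are all hypothetical), a short audit can flag the two weaknesses the Fortinet breach exploited: management interfaces reachable from the internet and logins that rely on passwords alone.

```python
from dataclasses import dataclass

# Hypothetical service labels treated as management surfaces.
MANAGEMENT_SERVICES = {"admin-https", "ssh", "telnet"}


@dataclass(frozen=True)
class Service:
    host: str
    port: int
    service_type: str      # e.g. "admin-https", "ssl-vpn", "web"
    internet_facing: bool
    auth: str              # "none", "password", or "mfa"


def audit(inventory: list[Service]) -> list[str]:
    """Flag internet-facing management interfaces and password-only logins."""
    findings = []
    for svc in inventory:
        if not svc.internet_facing:
            continue  # this sketch only audits the external attack surface
        where = f"{svc.host}:{svc.port}"
        if svc.service_type in MANAGEMENT_SERVICES:
            findings.append(f"{where}: {svc.service_type} management interface reachable from the internet")
        if svc.auth == "password":
            findings.append(f"{where}: password-only login, enforce MFA")
    return findings


inventory = [
    Service("fw-edge-01", 443, "admin-https", True, "password"),
    Service("fw-edge-01", 10443, "ssl-vpn", True, "mfa"),
    Service("www", 443, "web", True, "none"),
    Service("fw-core-02", 22, "ssh", False, "password"),
]
for finding in audit(inventory):
    print(finding)
```

In practice the inventory is the hard part; the check itself is trivial once you know what's exposed. Re-running it whenever the inventory changes keeps the MFA mandate from quietly eroding.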
